Tools for Data Analysis: Emergence of AI

Mary Jo Lamberti, PhD, discusses how AI is set to change the way data is handled and used in the pharmaceutical industry

Mary Jo Lamberti, PhD, research assistant professor and associate director of sponsored research at the Tufts Center for the Study of Drug Development, talks to contributing editor Tanuja Koppal, PhD, about artificial intelligence (AI) and how it’s set to change the way data is handled and used in the pharmaceutical industry.

Q: Tell us about your recent study and how you define artificial intelligence.

A: The Tufts Center for the Study of Drug Development (CSDD) recently partnered with the Drug Information Association (DIA) and eight pharmaceutical and biotechnology companies to form a working group. The group sought to understand AI adoption across the pharma industry and to gather insights into which initiatives and activities fall under the definition of AI. Members of the working group also provided input and feedback for a collaborative study, which included a survey sent to lists of individuals interested in AI. Results from this study were published online in June and appeared in the August 2019 issue of Clinical Therapeutics.

We had a good cross-section of small, mid-sized, and big pharma represented in our survey, and we received nearly 400 responses from more than 200 unique organizations. We found that there is no globally accepted definition of AI or of several related terms. Since we wanted to tailor our definition of AI specifically to pharma, we interviewed key experts in AI and, with input from the working group, developed definitions to use in our survey. For our purposes, AI can be defined as systems or computational models meant to augment human cognition. Algorithms that augment human cognition are a means by which machines identify complex patterns, trends, and relationships. We also developed definitions of machine learning, natural language processing, and computer vision for our study.

Q: What are some of the key factors that have led to AI-driven data analytics in the pharmaceutical industry?

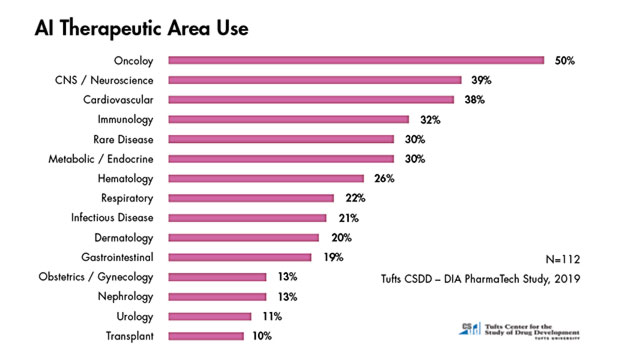

A: AI in pharma has been around for a while. However, it wasn't readily accepted because it was seen as a black box. I think a lot of it was fear or mistrust of AI methods, in which you teach a system or computer to learn and perform tasks rather than relying on people. We interviewed and surveyed people in drug discovery, and they all said that increased competition and the need to work faster and more efficiently are why AI started to catch on there. Companies also started using AI to find strategies and approaches to hedge against drug development risk. AI was also used to drive precision medicine and to develop treatments faster, particularly in areas such as rare diseases. Much of what is labeled as AI, but is probably closer to machine learning, is being applied to a broad range of areas, including disease identification and diagnosis, drug discovery and manufacturing, radiology and radiotherapy, and electronic health records.

Our survey found that the top therapeutic areas using AI were oncology, CNS, cardiovascular, immunology, and rare diseases. We also found that AI use within companies was fragmented and siloed, and organizations varied in how they allocated responsibility for AI. Forty-two percent indicated that they had no centralized responsibility and that various functions or departments, including individual business units, managed AI and new technologies.

The vendors we talked to said that they were providing a lot in terms of products and services, but people in pharma felt that what was being provided was not living up to their expectations. As a result, they were building home-grown systems and using vendors only as needed. The interviews and data analysis for this study were all completed in 2018, and we hope to do another study soon to determine what's hype and what's reality.

Data from the DIA PharmaTech Study, 2019. Credit: Tufts CSDD


Q: What are some of the current hurdles to AI adoption?

A: The culture in big pharma has been conservative and risk averse, but that is changing, and companies are adopting the new methods. There has been a shift in mindset toward embracing AI. Some silos still exist, but they are not as pronounced as they were 10 years ago.

However, it all comes down to the data: both its quality and its source. You need good-quality, robust data in order to implement AI and machine learning. The top challenges to implementing AI are a lack of adequate staff and skillsets; data structure, meaning data that is too unstructured to adapt to AI use; and insufficient capital or budgets for investment in AI. Identifying the appropriate data sets to feed into the AI model is also crucial. Staffing, and hiring people with the right skillsets in particular, is a big issue. Companies are now adding fewer bench scientists and more data scientists and computer scientists, and we expect to see more AI in the future. Even though there are many challenges, the only way to keep up and succeed is to hire more people trained in AI and to meet the skills needs within the workforce.

Mary Jo Lamberti is a faculty member at Tufts and leads multi-company sponsored and grant-funded research studies at the Tufts Center for the Study of Drug Development (CSDD) at Tufts University School of Medicine. She has extensive experience conducting research on pharmaceutical and biotechnology industry practices and trends affecting contract research organizations and investigative sites. She has been a speaker at industry conferences and has published articles in trade and peer-reviewed journals. She holds a BA from Wellesley College and a PhD in psychology from Boston University.