Computer Modeling and Simulation

Applications in chemistry, clinical, and pharmaceutical labs hold promise—with reservation

According to one author, modeling and simulation “live at the intersection between theory and experiment.”1 The model is an interpretation of current theory, while the execution of that model is a simulation of what the model designer expects to happen. This simulation can be extremely useful, because how well its predicted results compare with actual experimental results is a good test of whether you have accurately taken all factors relevant to the experiment into account.

Illustration 1: Role of Modeling and Simulation in Scientific Discovery. Office of Nuclear Energy.

To help visualize the potential problems in designing and using models, consider the management of your laboratory. A good case can be made that you are not managing a laboratory, but rather the visualization, or model, of the laboratory that you hold in your mind. How well your management of this model works depends on how closely the model corresponds to reality and on how critical any discrepancies are.

It is very entertaining, in a schadenfreude sense, to listen to group managers in informatics requirements-gathering meetings confidently describe how the analyses in their group are performed, only to have an analyst hesitantly interrupt to say that the described process is not the procedure they actually follow. Predictive models used in the laboratory are vulnerable to this same effect. These models can be very helpful, but it is imperative that you continually question their accuracy. The following sections illustrate some of the critical roles of modeling and simulation in the modern laboratory.

Chemistry laboratories

Among the simplest models that you are likely to encounter is the lowly calibration curve, particularly one in which the relationship between the value actually being measured and the concentration is linear. However, you are liable to encounter much more complex models and simulations even in a chemistry lab performing organic analysis. What has become almost a classic example is the modeling of a high-performance liquid chromatography (HPLC) system. It is not uncommon to have to develop a new analytical method to separate and quantify new organic compounds that the laboratory deals with. The traditional way would be to run a plethora of experiments under all of the potential conditions, but there are obvious drawbacks to this. Principally, consider how many experiments you would have to run to cover all of the potential analytical conditions, and the corresponding time and money involved.
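The linear calibration curve mentioned above can be sketched in a few lines. This is a minimal illustration, not a validated procedure: the standards, responses, and function names are hypothetical, and it assumes an ordinary least-squares fit with no weighting.

```python
# Hypothetical calibration standards: concentration (mg/L) vs. detector response.
concentrations = [0.0, 1.0, 2.0, 4.0, 8.0]
responses = [0.02, 0.21, 0.40, 0.81, 1.60]


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict_concentration(response, slope, intercept):
    """Invert the calibration line to estimate a concentration from a response."""
    return (response - intercept) / slope


slope, intercept = fit_line(concentrations, responses)
unknown = predict_concentration(0.60, slope, intercept)
print(f"slope={slope:.4f}, intercept={intercept:.4f}, unknown={unknown:.2f} mg/L")
```

Even this simple model carries an assumption worth questioning: the line is only trustworthy inside the concentration range spanned by the standards.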

To list just a few, your potential variables include the type of separation column, the solvent used, whether the nature of the solvent varies over time, whether the temperature of the solvent varies over time, the rate of flow of the solvent, the sample injection size, the choice of detector, and potentially the optimal optical wavelength for that detector. Because the number of runs needed to cover every combination grows multiplicatively with each added variable, it should be obvious that developing this new method would require a significant investment of time, patience, and, oh yes, money!
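To get a feel for how quickly the run count grows, the sketch below counts the conditions in a full grid over a handful of variables. The factor names and levels are hypothetical placeholders chosen only to show the arithmetic; a real method-development plan would pick them from the chemistry.

```python
from itertools import product
from math import prod

# Hypothetical levels for each method variable (illustrative only).
factors = {
    "column": ["C18", "C8", "phenyl"],
    "organic_solvent": ["acetonitrile", "methanol"],
    "gradient_time_min": [10, 20, 30],
    "temperature_C": [25, 35, 45],
    "flow_rate_mL_min": [0.5, 1.0, 1.5],
    "injection_volume_uL": [5, 10],
}


def full_factorial_size(levels_by_factor):
    """Number of runs needed to test every combination of levels once."""
    return prod(len(levels) for levels in levels_by_factor.values())


def full_factorial_runs(levels_by_factor):
    """Yield each combination of levels as a dict of factor -> level."""
    names = list(levels_by_factor)
    for combo in product(*levels_by_factor.values()):
        yield dict(zip(names, combo))


print(full_factorial_size(factors), "runs for a single replicate")
```

With only two or three levels per variable, this illustrative grid already contains 324 runs, and adding one more three-level variable would triple it.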

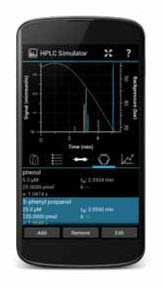

Illustration 2: Android HPLC Simulator. Regents of the University of Minnesota.

Fortunately, thanks to extensive research on how HPLC systems work, it has been possible to develop models of their operation, and it is feasible to run multiple computer simulations to identify the optimal set of analysis conditions. This will still require the analysis of samples under different conditions, but only a handful, compared with what you would otherwise have to run. One caveat to keep in mind is that there are multiple approaches to modeling for HPLC optimization. For those interested in getting a feel for the history and approaches for HPLC modeling, this paper from Chromatography Today2 would probably be a good place to start.

While many HPLC system and column manufacturers have developed their own proprietary software for analysis optimization, there are a number of free programs available on the internet to perform this optimization. As evidence that you do NOT need access to a supercomputer to perform these optimization calculations, HPLCsimulator.org, a designer of simulator and training tools, offers an app version, HPLC Simulator for Android.3
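As a rough illustration of how a retention model can replace most of the bench runs, the sketch below scans mobile-phase compositions with the linear solvent strength approximation, ln k = ln k_w - S * phi, a common simplification for isocratic reversed-phase separations. It is only one of the multiple modeling approaches and is not presented as the method used by any vendor package or by HPLC Simulator. The solute parameters, dead time, and plate number are invented for illustration.

```python
import math

T0_MIN = 1.0     # column dead time in minutes (assumed)
PLATES = 10_000  # column plate number N (assumed)

# Hypothetical solutes: (ln k_w, S) for the model ln k = ln k_w - S * phi,
# where phi is the organic fraction of the mobile phase.
SOLUTES = {"A": (6.0, 9.0), "B": (6.5, 9.5)}


def retention_time(ln_kw, s, phi):
    """Isocratic retention time t_R = t_0 * (1 + k)."""
    k = math.exp(ln_kw - s * phi)
    return T0_MIN * (1.0 + k)


def resolution(t1, t2):
    """Rs = 2 * |t2 - t1| / (w1 + w2), with peak width w = 4 * t / sqrt(N)."""
    return math.sqrt(PLATES) / 2.0 * abs(t2 - t1) / (t1 + t2)


def scan(phis, min_rs=1.5):
    """Return (phi, run_time, Rs) for the fastest condition meeting min_rs."""
    best = None
    for phi in phis:
        times = [retention_time(ln_kw, s, phi) for ln_kw, s in SOLUTES.values()]
        rs = resolution(*times)
        run_time = max(times)
        if rs >= min_rs and (best is None or run_time < best[1]):
            best = (phi, run_time, rs)
    return best


phis = [round(0.30 + 0.05 * i, 2) for i in range(11)]  # 0.30 to 0.80
print(scan(phis))
```

In practice the two parameters per solute would be estimated from a small number of real runs, which is why a handful of experiments is still needed before the simulation can be trusted.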

Clinical laboratories

Clinical laboratories, whether freestanding or integrated within a hospital or clinic, can also profit from simulation and modeling. Researchers at Stanford University recently (July 10, 2018) announced the development of a computer program, Decagon, which uses graph convolutional neural networks to predict potential side effects when two drugs are taken at the same time.4 The concern is that in many cases these drugs have never been administered together, so no one knows which side effects, if any, might arise from their interaction. Worse, the drugs might be prescribed by different doctors who have no idea that the patient is on another medication.

According to this research group, the number of known side effects is currently around 1,000, while the number of drugs on the market is currently approximately 5,000. From simple combinatorics, 5,000 drugs form about 12.5 million distinct pairs, which means that there are roughly 12.5 billion possible side effects among possible pairs of drugs.
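The size of this search space depends on how the pairs are counted. The sketch below is a minimal check that assumes unordered pairs of distinct drugs and treats every side effect as possible for every pair; the function name is hypothetical.

```python
from math import comb


def possible_pair_side_effects(n_drugs, n_side_effects):
    """Count (unordered drug pair, side effect) combinations."""
    return comb(n_drugs, 2) * n_side_effects


pairs = comb(5000, 2)                             # 12,497,500 distinct pairs
total = possible_pair_side_effects(5000, 1000)    # 12,497,500,000 combinations
print(f"{pairs:,} drug pairs, {total:,} pair and side-effect combinations")
```

Either way the count is far beyond what could be tested experimentally, which is the motivation for a predictive model.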

Decagon starts from a database of all known side effects for each drug, all known protein-protein interactions in the body, and all known drug-protein interactions, and feeds this data to its deep-learning algorithm running on a supercomputer.

Once this knowledge base is generated, Decagon can project potential side effects from binary drug interactions on a much simpler computer, even if those drugs have never been used together or if a specific side effect has not been observed with either drug. As a test of the accuracy of the system, its top 10 predictions, which had not been entered into its database, were cross-checked against the literature and, so far, at least five of them have been confirmed. Unfortunately, Decagon can make projections only for binary drug combinations, while the US Centers for Disease Control and Prevention estimates that 23 percent of all Americans took at least two prescription drugs in the past month and that 39 percent of those over 65 years of age took five or more.

Pharmaceutical laboratories

Pharma labs may actually benefit the most from modeling and simulation, as they attack both ends of the labs’ product streams. As we gather more information as to how and why a particular drug works, we can build models to predict the behavior of new molecules, allowing lab staff to design new drugs with potentially fewer side effects and increased effectiveness. At the same time, as we learn more about how cancerous cells evade the body’s immune system, it is possible to design drugs to neutralize this capability. Recent research has allowed the design of molecules that block the sites on some cancer cells that tell the immune system not to attack them. While this approach is highly specific, it can also be extremely effective. In one promising trial, the experimental drug did nothing for 80 percent of the test population, but the other 20 percent underwent a complete remission, with no residual cancer cells detected in their bodies.

Also accelerating the delivery of new drugs is a greater willingness of regulatory agencies worldwide to allow, and even encourage, a more prominent role for modeling and simulation in clinical trials, potentially reducing the number of trials and/or the number of people involved in the trials. Some of this willingness is due to ethical concerns, while some is due to increasing faith in the accuracy of the models and resulting simulations.

Ending caveat

From these examples, it is clear that modeling and simulation do have a strong role to play in scientific discovery. However, there are hazards in relying too heavily on modeling and simulation without confirmatory experiments. It is the nature of models to reflect the assumptions and factors that go into their design. While many models have been shown to work well, they are often built on observed physical characteristics rather than on a full scientific understanding of why those properties behave as they do. Frequently, this potential failure point is amplified by a belief that we know more about how a process works than we actually do. This can occur in any field, but it is particularly evident in medicine, in which new layers of subtle interaction are continually being discovered. Without that fundamental knowledge, it is easy to hit an unseen trip wire: a factor that was never considered in the model because it happened to remain constant in the samples used to build or train the model.
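The trip wire described above can be shown with a toy example. The sketch assumes an invented detector whose sensitivity drifts with temperature, calibrated only at 25 C, so the fitted model has no way to see the temperature effect. The response function and all numbers are hypothetical.

```python
def true_response(conc, temp_c):
    """Hypothetical ground truth: sensitivity changes 2% per degree from 25 C."""
    return 0.2 * conc * (1.0 + 0.02 * (temp_c - 25.0))


def fit_slope_through_origin(x, y):
    """Least-squares slope for the model y = slope * x."""
    return sum(xi * yi for xi, yi in zip(x, y)) / sum(xi * xi for xi in x)


# Every training sample was measured at 25 C, so temperature never varies.
train_conc = [1.0, 2.0, 4.0, 8.0]
train_resp = [true_response(c, 25.0) for c in train_conc]
slope = fit_slope_through_origin(train_conc, train_resp)


def estimate_conc(response):
    """Concentration estimate from the temperature-blind calibration."""
    return response / slope


for temp in (25.0, 35.0):
    estimate = estimate_conc(true_response(5.0, temp))
    print(f"true 5.00 mg/L at {temp:.0f} C -> estimated {estimate:.2f} mg/L")
```

The model reproduces the training conditions perfectly and still overestimates by 20 percent at 35 C, which is exactly the kind of discrepancy that only a confirmatory experiment would reveal.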

As others have stated, “Modeling and simulation should never be viewed as a substitute for real-world experimentation.”1

As a final thought, it is worth keeping in mind this quote from Nobel physicist Richard P. Feynman: “If you thought that science was certain— well, that is just an error on your part!”

References:

1. Office of Nuclear Energy. Role of Modeling and Simulation in Scientific Discovery [Internet]. Energy.gov. 2013 [cited 2018 Aug 15]. Available from: https://www.energy.gov/ne/articles/role-modeling-and-simulation-scientific-discovery

2. Molnár I, Rieger H-J, Kormány R. Chromatography Modelling in High Performance Liquid Chromatography Method Development [Internet]. Chromatography Today. 2013 [cited 2018 Aug 14]. Available from: https://www.chromatographytoday.com/article/bioanalytical/40/imre_molnr_hans-jrgen_rieger_rbert_kormny/chromatography_modelling_in_high_performance_liquid_chromatography_method_development/1387

3. hplcsimulator.org - the free, open-source HPLC simulator [Internet]. hplcsimulator.org. 2018 [cited 2018 Aug 14]. Available from: http://www.hplcsimulator.org/

4. Wiggers K. Stanford researchers develop AI that can predict pharmaceutical drug interactions [Internet]. VentureBeat. 2018 [cited 2018 Aug 13]. Available from: https://venturebeat.com/2018/07/10/stanford-researchers-develop-ai-that-can-predict-pharmaceutical-drug-interactions/