BIOVIA Pipeline Pilot simplifies the building, deployment, management, and reporting of complex machine learning models and advanced analytics for sciences

Problem: Answering the question “What do I test next?” is a fundamental challenge for any research lab. It guides the flow of research from hypothesis to discovery. Data streamlines this path, answering some questions and raising new ones; the challenge is deciding which question to answer first. Your next steps are often determined by whatever data is most accessible, which limits the scope of your decision. Advances in the internet of things (IoT) can make more data available and add context to an experiment (e.g., “What was the ambient temperature when I ran this test?”), but simply adding more data is not a complete solution. We need a way to process larger amounts of data more quickly and efficiently, yet we are reaching the limits of what traditional approaches can do on their own.

Prescriptive analytics offers a way forward and represents the next step in advanced analytical techniques. Rather than simply predicting the answer to “What will happen if I do this?” prescriptive analytics suggests answers to “How do I make this happen?” Applying machine learning to this methodology can further refine models, improving the quality of their suggestions as new data becomes available. For example, a model could examine a given experiment and, drawing on similar previous experiments, suggest the next test (e.g., “Measure Protein A’s efficacy between pH 6.3 and 6.8 in intervals of 0.05 rather than from pH 6 to 7 in intervals of 0.1.”). The model might also point out potential errors in the experiment (e.g., “Ambient temperature in the testing room was five percent higher than in previous experiments.”).
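The two kinds of suggestion described above can be illustrated with a deliberately simple heuristic. The Python sketch below is not how Pipeline Pilot or any particular prescriptive model works; the function names, the 80%-of-best cutoff, the 5% tolerance, and the efficacy and temperature values are all hypothetical. It narrows a coarse pH screen to the region where efficacy stayed close to its best result and proposes a finer grid there, and it flags an ambient-temperature reading that drifts from earlier runs.

```python
from statistics import mean


def suggest_next_ph_grid(runs, keep_fraction=0.8, step=0.05):
    """Propose a finer pH grid covering the most promising region (hypothetical heuristic).

    runs: list of (pH, efficacy) pairs from earlier, coarser experiments.
    Keeps every pH whose efficacy is at least keep_fraction of the best
    result, then returns evenly spaced pH values spanning that region.
    """
    best = max(eff for _, eff in runs)
    promising = [ph for ph, eff in runs if eff >= keep_fraction * best]
    lo, hi = min(promising), max(promising)
    n_steps = round((hi - lo) / step)
    # Round to two decimals to hide floating-point noise such as 6.3500000000000001.
    return [round(lo + i * step, 2) for i in range(n_steps + 1)]


def check_condition_drift(current, history, tolerance=0.05):
    """Flag a reading whose relative deviation from the historical mean exceeds tolerance.

    Returns (flagged, relative_deviation). The 5% default is an assumption.
    """
    baseline = mean(history)
    deviation = (current - baseline) / baseline
    return abs(deviation) > tolerance, deviation


if __name__ == "__main__":
    # Hypothetical coarse screen: pH 6.0 to 7.0 in steps of 0.1 (illustrative values only).
    coarse_runs = [
        (6.0, 0.40), (6.1, 0.45), (6.2, 0.52), (6.3, 0.71),
        (6.4, 0.78), (6.5, 0.83), (6.6, 0.85), (6.7, 0.80),
        (6.8, 0.74), (6.9, 0.55), (7.0, 0.48),
    ]
    print(suggest_next_ph_grid(coarse_runs))
    # -> [6.3, 6.35, 6.4, 6.45, 6.5, 6.55, 6.6, 6.65, 6.7, 6.75, 6.8]

    # Hypothetical ambient temperatures (degrees C) from previous runs vs. today.
    flagged, dev = check_condition_drift(22.4, [21.0, 20.5, 21.5, 21.0])
    if flagged:
        direction = "above" if dev > 0 else "below"
        print(f"Ambient temperature is {abs(dev):.1%} {direction} previous experiments.")
        # -> Ambient temperature is 6.7% above previous experiments.
```

A real prescriptive model would learn which regions and conditions matter from the data rather than rely on fixed cutoffs; the fixed thresholds here only keep the idea easy to follow.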

However, building complex models that can provide actionable, “prescriptive” suggestions requires deep technical knowledge and expertise. Data science is an “art.” Experienced modelers often deal with intricate problems, balancing multivariate systems and weighing the impact of different factors on a prediction. You have to know what questions to ask and how to ask them. You also have to design, build, train, and validate each model to ensure high-quality results. This is especially true given the specialized formats and applications of scientific data. Powerful, scientifically aware tools can help streamline model building, deployment, and management, while also ensuring that best practices are captured and shared across the organization.

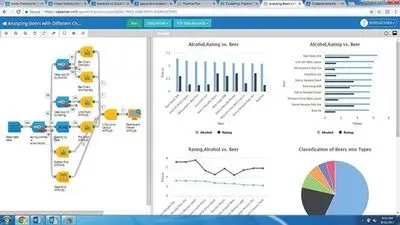

Solution: BIOVIA Pipeline Pilot is a graphical scientific authoring application that provides a comprehensive environment for the design, training, validation, and deployment of advanced analytics. It uses a drag-and-drop graphical user interface to speed up model creation, and each node, called a “component,” allows common code to be shared throughout the organization. Expert users can capture their best practices by creating their own components, including components that integrate commonly used languages such as Python and R. Pipeline Pilot’s native collection of model learners and validation tools simplifies the creation of complex machine learning models. The application also offers a wide range of APIs for seamless integration with a variety of instruments and third-party software, so data can be extracted automatically for analysis.

Pipeline Pilot streamlines the deployment of models to the broader organization, effectively democratizing the benefits of prescriptive analytics. Models can be deployed as “robots” that automatically process data as it is created or run scheduled tasks at custom intervals. They can also be deployed as widgets or web services, simplifying the experience for end users and freeing up IT resources for higher-value tasks. Pipeline Pilot provides the tools organizations need to incorporate prescriptive analytics into their science and every aspect of their R&D workflows.

For more information, please visit http://3dsbiovia.com/products/collaborative-science/biovia-pipeline-pilot/