What’s at stake if you’re not assessing risks in the lab? What if a researcher scales up an energetic compound ten-fold? If this experiment were to kill someone, how would it happen? How can one assess whether they’re using enough controls to mitigate risks?

Things go awry in life and in labs; it’s part of human nature. Consciously or not, humans assess risks all the time. Take driving, for instance: drivers try to forecast how others will react in the moment. It’s a constant, tiring process, so the brain creates mental shortcuts and relies on fast, intuitive thinking rather than working through each step consciously. While vital in driving, this fast thinking doesn’t work as well in labs.

Contributing factors of risk

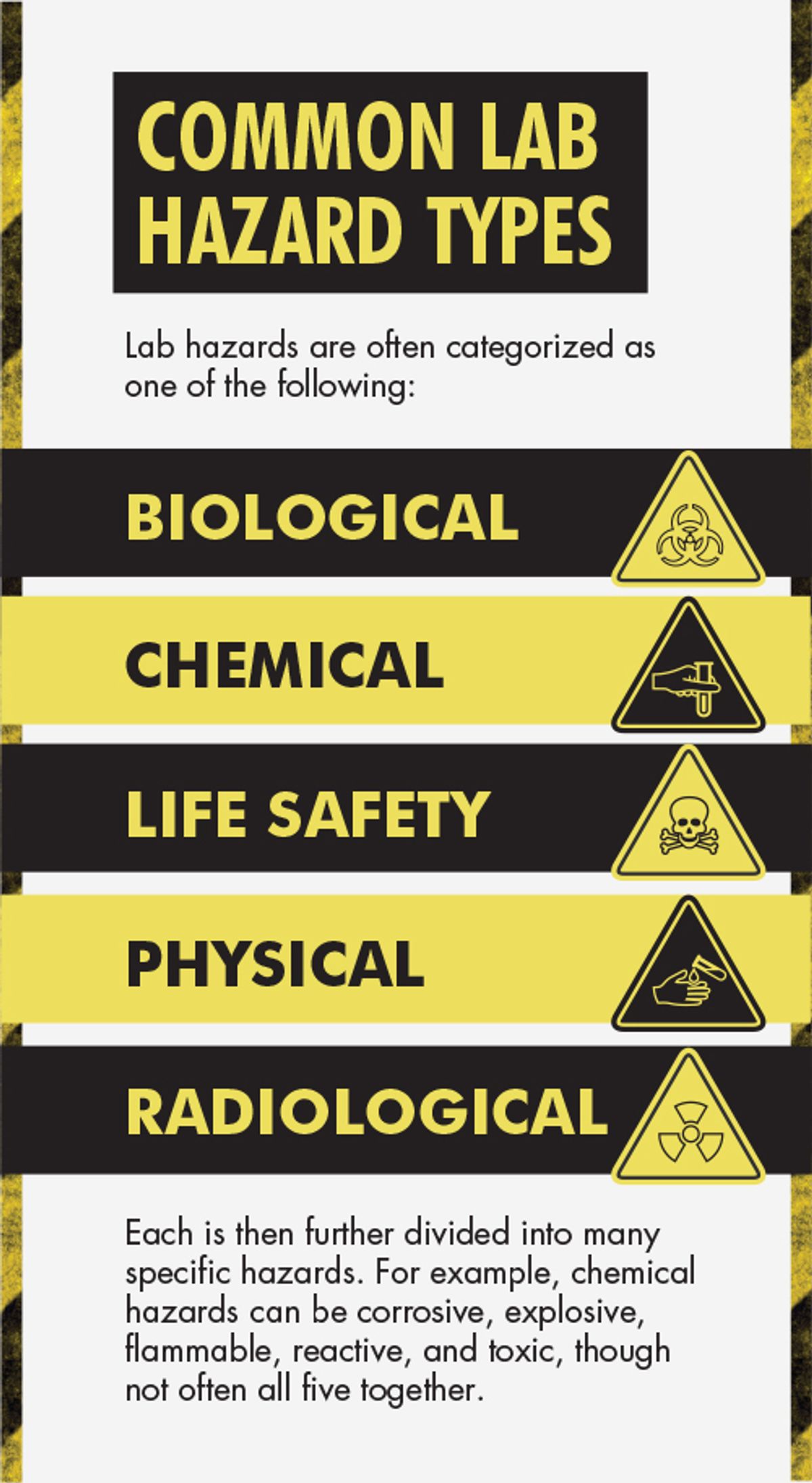

What is risk, and why is it so critical to assess it and embrace the concept and its usefulness? Whereas safety is a binary idea (i.e., safe vs. unsafe), risk has two or three factors (depending on context), making it more purposeful and easier to discuss. The three factors of risk are severity of consequence, exposure to the hazard, and probability of outcome. These factors provide plenty of space to have open conversations about risk assessment and our differing views. When risks go undiscussed or unassessed, lab incidents often occur with poor outcomes (e.g., loss of life or health, broken equipment, or lost data and time).

Research practices that factor into risk assessment include working independently, changing experiments, and pushing toward cutting-edge innovation. All are typical and often necessary, yet they can contribute to risk.

Human decision-making also plays a strong role through cognitive biases (e.g., confirmation bias and optimism bias), mental shortcuts (i.e., heuristics), threat to value, and fast-thinking decisions. These are all part of human nature and, along with unawareness, add to risk.

There are many risk assessment tools, systems, techniques, and programs you can use. Each has its own purposes, benefits, and limits. Being familiar with several can help make risk assessments easier and clearer. It’s more important to use at least one than to find the perfect one.

Risk tools

A common approach is the two-factor risk matrix (figure 1). Risk can be as simple as severity times probability. The resulting risk ratings help in discussing risks and sorting them into low, medium, and high groups. The benefits of this approach are its simplicity and its visual layout as a matrix. Two challenges are getting consensus on probability ratings and the omission of the third risk factor: exposure.
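The severity-times-probability idea can be sketched in a few lines of Python. This is only an illustration: the 1-to-5 rating scales and the cutoffs for the medium and high groups (5 and 15) are assumptions made for the example, not values from any standard, and a lab group would need to agree on its own.

```python
def risk_score(severity: int, probability: int) -> int:
    """Two-factor risk: severity times probability, each rated 1 (lowest) to 5 (highest)."""
    for name, value in (("severity", severity), ("probability", probability)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return severity * probability


def risk_band(score: int, medium_from: int = 5, high_from: int = 15) -> str:
    """Sort a score into low, medium, or high. The cutoffs are assumed for illustration."""
    if score >= high_from:
        return "high"
    if score >= medium_from:
        return "medium"
    return "low"


def risk_matrix() -> list[list[str]]:
    """Build the 5 x 5 grid of bands: rows are severity 1-5, columns are probability 1-5."""
    return [[risk_band(risk_score(s, p)) for p in range(1, 6)] for s in range(1, 6)]


if __name__ == "__main__":
    # Print one row per severity level so the grid can be read like a risk matrix.
    for severity, row in enumerate(risk_matrix(), start=1):
        print(f"severity {severity}: " + "  ".join(f"{band:<6}" for band in row))
```

Keeping the cutoffs as parameters makes the hardest part of the exercise visible: the arithmetic is trivial, while agreeing on the ratings and group boundaries is where the discussion happens.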

A three-factor risk formula is risk = severity (of impact) × exposure (to the hazard) × likelihood (of outcome). This adds the third factor of exposure, which can apply to an individual (e.g., someone riding a bike) or a group (e.g., all bicycle commuters). One challenge is creating a useful diagram with three axes (x, y, and z). Another is how to assess exposures. Using biking as an example again, one is much more exposed to the hazard (i.e., traffic) when biking in the road than in a bike lane, and more exposed on busier streets than on quiet neighborhood roads.
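To show how exposure changes the picture, here is a minimal Python sketch of the three-factor formula applied to the biking example. The `Scenario` class, the 1-to-5 scales, and every rating below are hypothetical values chosen for illustration; only the multiplication itself comes from the formula above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    severity: int    # severity of impact, 1 (minor) to 5 (fatal)
    exposure: int    # exposure to the hazard, 1 (rare) to 5 (constant)
    likelihood: int  # likelihood of the outcome, 1 (remote) to 5 (expected)

    def risk(self) -> int:
        """Three-factor risk: severity x exposure x likelihood."""
        return self.severity * self.exposure * self.likelihood


# Hypothetical ratings: severity and likelihood are held constant so that
# only the exposure to traffic differs between scenarios.
scenarios = [
    Scenario("bike lane, quiet street", severity=4, exposure=1, likelihood=2),
    Scenario("bike lane, busy street", severity=4, exposure=2, likelihood=2),
    Scenario("in the road, busy street", severity=4, exposure=4, likelihood=2),
]

# Rank from highest to lowest risk.
ranked = sorted(scenarios, key=Scenario.risk, reverse=True)
for scenario in ranked:
    print(f"{scenario.name}: {scenario.risk()}")
```

Holding two factors constant is a simple way to see how much of a rating comes from exposure alone, which a two-factor matrix cannot show.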

Some risk assessment tools or techniques reverse-engineer root cause analysis (RCA), a common incident investigation technique (not without its own limits). One example of RCA is the “Five Whys,” in which we keep asking “why?” until we find a root cause of the incident. We can work backwards by instead asking “how” an incident could be caused until we find one or more ways, similar to a “what if” approach.

In “what ifs,” we might brainstorm the various ways things could go poorly. Examples could be: what if the bottle breaks, what if a glove has a pinhole, or what if the eyewash doesn’t work? Each is assessed, rated on severity and odds, and addressed. We might examine how easy the bottle is to hold, check whether the gloves have any pinholes, and verify that the eyewash works properly with no obstacles in our way.

Recognize, assess, minimize, prepare (RAMP) is a widely used risk assessment process in which the four words form a series of steps. Its stepwise structure follows the familiar safety approach to hazards: recognize, evaluate, and control.

Many organizations with labs have some type of Lab Hazard Assessment Tool (LabHAT) or Lab Risk Assessment Tool (LabRAT). Most LabHATs are several pages long with boxes to check (e.g., hazards and PPE). Some allow helpful descriptions that provide details and context, though how they are analyzed isn’t always evident on the form. Some LabRATs are more decision-based or provide guidance on hazard controls. A better approach can be a brief spreadsheet-based algorithm that adds up points for the hazards present and subtracts points for the controls implemented.
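The add-for-hazards, subtract-for-controls idea might look something like the Python sketch below. It is not taken from any particular LabHAT or LabRAT: the hazard names, control names, and point values are all hypothetical, and a real tool would use weights agreed on by the organization.

```python
# Hypothetical point values, standing in for two columns of a spreadsheet.
HAZARD_POINTS = {
    "flammable solvent": 3,
    "pyrophoric reagent": 5,
    "high pressure": 4,
    "working alone": 2,
}
CONTROL_CREDITS = {
    "fume hood": 2,
    "blast shield": 3,
    "buddy system": 2,
    "reduced scale": 2,
}


def net_hazard_score(hazards, controls):
    """Add points for each hazard present and subtract credits for each control in place.

    The result is floored at zero so that controls can never produce a negative score.
    """
    unknown = [h for h in hazards if h not in HAZARD_POINTS]
    unknown += [c for c in controls if c not in CONTROL_CREDITS]
    if unknown:
        raise KeyError(f"no point value defined for: {unknown}")
    total = sum(HAZARD_POINTS[h] for h in hazards)
    credit = sum(CONTROL_CREDITS[c] for c in controls)
    return max(total - credit, 0)


if __name__ == "__main__":
    hazards = ["pyrophoric reagent", "working alone"]
    print("no controls:", net_hazard_score(hazards, []))
    print("with controls:", net_hazard_score(hazards, ["fume hood", "buddy system"]))
```

Rejecting unknown entries, rather than silently scoring them as zero, keeps an unlisted hazard from looking like no hazard at all.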

Pre-mortems are a method of determining in advance what might go so wrong that someone (or the project) could die. Ask the question, “If we were to die, what would’ve killed us?” It’s an effective question for getting people to consider the ultimate risk: the loss of one’s life.

Failure Mode and Effects Analysis (FMEA) is a method that examines each individual part of a system for its possible failure points. The odds of each failure can be described in broad terms, or numerical ratings can be applied.
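One common way to apply numerical ratings in FMEA is the risk priority number (RPN), the product of severity, occurrence, and detection ratings, each conventionally scored from 1 to 10. The article does not prescribe this scheme, so treat the Python sketch below as one conventional option; the components, failure descriptions, and ratings are hypothetical.

```python
from typing import NamedTuple


class FailureMode(NamedTuple):
    component: str
    failure: str
    severity: int    # 1 (negligible) to 10 (catastrophic)
    occurrence: int  # 1 (very unlikely) to 10 (almost certain)
    detection: int   # 1 (almost always caught in time) to 10 (almost never caught)

    @property
    def rpn(self) -> int:
        """Risk priority number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


# Hypothetical worksheet rows for a reflux setup.
modes = [
    FailureMode("condenser hose", "slips off and floods the bench", 4, 5, 3),
    FailureMode("heating mantle", "controller sticks on", 8, 2, 6),
    FailureMode("nitrogen line", "regulator fails open", 7, 2, 4),
]

# Review the highest RPN first.
by_priority = sorted(modes, key=lambda mode: mode.rpn, reverse=True)
for mode in by_priority:
    print(f"{mode.rpn:>4}  {mode.component}: {mode.failure}")
```

In this made-up worksheet the heating mantle ranks first even though it fails less often than the hose, because its failure is more severe and harder to detect.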

Fishbone (aka Ishikawa) diagramming is a useful, holistic way to map the differing factors that contribute to a process (like an experiment). These often include people, methods, materials, and the environment. The finished diagram looks like a fish skeleton, hence the name.

Process hazard analysis is often used in industry to analyze an entire process and can involve several of the other methods listed here. It can be in the form of a flow chart, a table, or other graphics.

Fault tree analysis is a diagrammatic approach that uses logic (e.g., if/then conditions and AND, OR, and NOT gates) to show in detail how a process will flow based on what occurs within it and on the actions of outside agents such as humans.
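When probabilities can be estimated, the gates in a fault tree can also be evaluated numerically. The Python sketch below assumes that the basic events are independent, which is a simplification that often does not hold in practice, and the events and probabilities are hypothetical.

```python
from math import prod


def and_gate(*probabilities: float) -> float:
    """Output event occurs only if every input occurs (inputs assumed independent)."""
    return prod(probabilities)


def or_gate(*probabilities: float) -> float:
    """Output event occurs if at least one input occurs (inputs assumed independent)."""
    return 1 - prod(1 - p for p in probabilities)


# Hypothetical basic-event probabilities for a single experiment run.
p_spill = or_gate(0.02, 0.01)            # bottle breaks OR transfer line leaks
p_ignition = or_gate(0.05, 0.01)         # hot plate left on OR static discharge
p_fire = and_gate(p_spill, p_ignition)   # top event: a spill AND an ignition source

print(f"spill: {p_spill:.4f}, ignition source: {p_ignition:.4f}, fire: {p_fire:.6f}")
```

Even without trustworthy numbers, writing the tree this way makes its structure explicit: removing either branch of the AND gate prevents the top event, while every branch of an OR gate must be controlled.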

Pareto charts use bars to show how frequently something occurs, such as an error in judgment or measurement, sorted from most to least frequent, and a line to show the cumulative total. They’re common in quality systems, making them a good fit for labs.
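The numbers behind a Pareto chart are simple to compute: counts sorted from most to least frequent (the bars) and a running cumulative percentage (the line). The following Python sketch builds that table; the function name and the error log are hypothetical.

```python
from collections import Counter


def pareto_table(observations):
    """Return (category, count, cumulative percent) rows, most frequent category first."""
    counts = Counter(observations).most_common()
    total = sum(count for _, count in counts)
    rows, running = [], 0
    for category, count in counts:
        running += count
        rows.append((category, count, round(100 * running / total, 1)))
    return rows


# Hypothetical log of error types recorded during a lab notebook audit.
log = ["labeling"] * 12 + ["measurement"] * 5 + ["judgment"] * 2 + ["transcription"] * 1
table = pareto_table(log)

for category, count, cumulative in table:
    print(f"{category:<14}{count:>3}{cumulative:>7}%")
```

Reading down the cumulative column shows how few categories account for most of the occurrences, which is the reason for sorting the bars in the first place.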

A discussion of our risk perceptions is another technique to help lab staff and others engage in useful conversations about how each person views the risks differently. One’s risk perceptions are right for oneself but may not match others’ perceptions. Openly comparing differences can make for productive dialogue.

An overlooked yet powerful risk communication tool is storytelling. Stories are sense- or meaning-making tools that originally helped us survive 100,000 years ago. Storytelling, with all its nuances, plot, characters, and emotions, can provide helpful context and greater understanding among lab group members. There are tribes that will tell stories around the fire after the day’s work is done to iron out conflicts, bond, relate, and celebrate their challenges and deeds [1, 2]. Sharing stories on lab incidents, close calls, and one’s risk perceptions facilitates active engagement like no other technique.

Key takeaways

There are many risks in labs that can be assessed using one or more effective tools or techniques. Our risk systems, cognitive biases, and heuristics can lead anyone to make odd and, at times, bad decisions. These risk assessment methods and programs help us think more objectively and critically, explore probabilities, and demonstrate our due diligence.

It’s beneficial to review them and decide which one(s) to use. We often need more than one. Choosing to use one or more is key; finding one that works in each case is best. Which ones should you use? The ones that you’re most likely to use, understand, and benefit from. However, going without any or going it alone is not an option: the probabilities of bad outcomes will eventually catch up with you in the lab.

References:

1. Smith, Daniel et al. “Cooperation and the evolution of hunter-gatherer storytelling.” Nature Communications. Vol. 8, issue 1, pp. 1853. Dec. 2017. DOI: https://doi.org/10.1038/s41467-017-02036-8. https://www.nature.com/articles/s41467-017-02036-8.

2. Wiessner, Polly. “Embers of society: Firelight talk among the Ju/’hoansi Bushmen.” Proceedings of the National Academy of Sciences. Vol. 111, issue 39, pp. 14027-14035. Sept. 30, 2014. DOI: https://doi.org/10.1073/pnas.1404212111. https://www.pnas.org/doi/10.1073/pnas.1404212111.