iStock

Periods of technological disruption are fraught with existential uncertainty. When the blacksmith Hephaestus of Homeric myth forged his automatons and directed them to produce weapons for the Olympian gods, it was symbolic of a 3,000-year-old worry that the human worker would, imminently, be replaced. Labor automation has always been a polarizing subject, approached alternately with hushed caution and with a tendency to rant and rave, to smash and rend.

Although the godfather of machine tools, the lathe, came into widespread use by the 13th century, automation did not begin in earnest until the dawn of the Industrial Revolution. Advances in milling technology allowed the first continuous rolling of paper and sheet metal with minimal human interference. Later innovations in textile manufacturing presented the Luddites with their impending Armageddon, and they exacted futile vengeance on the mechanized surrogates of their human supervisors. As the tractor replaced the horse-drawn plow and the car cashiered the coach-and-four, thousands of the heirs of Hephaestus were suddenly jobless. As demand in more modern, consumer-driven industries outstripped the capacity of available labor to fulfill it, automation crept or blundered into those industries. And yet, human labor continued on. Some futurists even predicted a 20-hour workweek (note: be wary of those who claim that job title). Instead, with an ever-greater workload (alas), labor continued robustly into arenas thought safe from automation, where education is intensive and skill sets are highly specialized: laboratory science, for example.

The mill, the mechanized assembly line, and even the computer are at odds with the concept that scientific inquiry can be automated. The gathering of observations that form the basis of inductive principles upon which hypotheses are built is necessarily a sensory process, poorly adaptable to circuitry. Although most hypotheses are more realistically ex post facto rationalizations, the computational power of minds in collaboration would still seem to boggle the capabilities of silicon and wire. But perhaps we are arrogant in our specialization, thinking that we can’t or shouldn’t outsource our discoveries to machines. Rather, while we hold the processes of observation and hypothesis with the grip of the righteous, automation has clearly enabled and improved experimentation for many years and continues to do so in ways ever more significant.

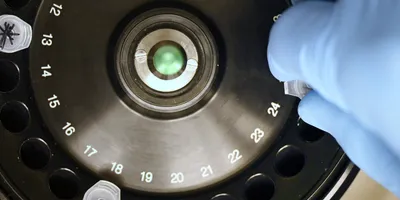

Fundamentally, automation in experimentation is an adaptive response to throughput. Secondarily, it is a response to the noted crisis in reproducibility from which research science suffers. With the rapid progression of the quantity and quality of genomics (and other -omics) data through continually evolving next-generation sequencing platforms, throughput has accelerated exponentially. Normal human capabilities can no longer handle the hands-on workload, and human interference can skew results unacceptably. Similarly, robotics commonly substitutes for human hands wherever possible in the deposition of reagents for high-throughput screening studies and in sensitive PCR-driven characterizations of polymorphisms in rare and important cancer cell types. Because of expense, expertise, and convenience, we delegate much of this type of work to dedicated core facilities. However, as costs decrease through innovation and common use, as journals more frequently require in-depth analyses of metadata, and as constrictions in budgets and laboratory space result in streamlined staffing solutions, automation will increasingly move to the individual bench.

Two experimental techniques, CRISPR gene editing and induced pluripotency, have recently shattered the perceived boundaries of biomedical research. The adaptation by Jennifer Doudna, Emmanuelle Charpentier, and others in 2012 of a bacterial immune response to direct DNA excision and repair in eukaryotic cells allows rapid, precise, and heritable changes to genes and their expression. Induced pluripotency, introduced by Shinya Yamanaka and colleagues in 2006, converts ordinary somatic cells to induced pluripotent stem cells (iPSCs) via expression of specific transcription factor genes. These techniques have moved frontiers outward at an alarming rate and provided an almost tangible finish line for the goals of genetic and regenerative medicine to devise permanent cures. However, the conceptual pipeline for such an effort is intrinsically daunting. For example, characterizing the genotype-to-phenotype relationship within a particular cancer cell may require many more CRISPR permutations and combinations than human hands can achieve. Automation of as much of the process as possible, from target identification to transfection to analysis, is crucial in terms of efficiency and consistency. Accordingly, corporations, startups, and organizations have begun to optimize CRISPR automation systems. Underlying much of this effort is the increasing importance of microfluidics.
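To give a rough sense of why the number of CRISPR combinations quickly exceeds what can be done by hand, here is a back-of-the-envelope sketch in Python. The gene count, the number of guides per gene, and the 96-well plate format are illustrative assumptions, not figures from any particular study, and the function names are hypothetical.

```python
from math import comb


def knockout_conditions(n_genes: int, max_order: int, guides_per_gene: int = 1) -> int:
    """Count distinct conditions that target 1..max_order genes at once.

    For each combination of k genes, every gene can be hit by any of
    `guides_per_gene` guides, giving guides_per_gene ** k variants.
    """
    return sum(
        comb(n_genes, k) * guides_per_gene ** k
        for k in range(1, max_order + 1)
    )


def plates_needed(conditions: int, wells_per_plate: int = 96) -> int:
    """Round up to whole plates, one condition per well (no replicates or controls)."""
    return -(-conditions // wells_per_plate)


# Hypothetical screen: 100 candidate genes, singles plus all pairs.
one_guide = knockout_conditions(100, max_order=2)
three_guides = knockout_conditions(100, max_order=2, guides_per_gene=3)

print(one_guide, plates_needed(one_guide))
print(three_guides, plates_needed(three_guides))
```

Under these assumptions, going from single knockouts to pairwise combinations alone multiplies the workload roughly fifty-fold, before replicates, controls, or triple combinations are considered.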

Microfluidics facilitates integration of many different laboratory procedures, in stepwise, parallel, or simultaneous fashion, in miniature onto a handheld device such as a chip or flow chamber. One variation, digital microfluidics (DMF), employs devices that control individual droplets over an open array of electrodes and direct them to move, split, combine, and dispense from reservoirs in sub-microliter volumes. Laboratories have already automated workflows using DMF in several ways, including: 1) quantitative analyses of enzymatic reactions, with accurate kinetics in the absence of hands-on delays; 2) simultaneous DNA ligations to generate molecular clonal libraries; 3) improved workflow in sample preparation and analysis in clinical diagnostics; 4) elimination of time- and reagent-consuming steps in proteomics studies; and 5) cell sorting via electrical, optical, or magnetic force inside droplets. Conceptually, DMF systems can establish miniaturized cell cultures and apply unique CRISPR library conditions to each one. Gene knockdowns and resulting phenotypes can be evaluated on-chip, an improvement over first-generation microfluidics chambers, which require removal of cells. Adaptations of DMF to CRISPR and other techniques are being accomplished in a DIY manner by research groups looking to augment capabilities at the scale of projects rather than of industries. As a result, some emerging devices are relatively low cost, and there is transparency and an open-source culture in data sharing and technology. In keeping with that spirit, there are several interrogative tools such as CRISPRdisco, an automated algorithm that identifies and analyzes CRISPR repeats from thousands of publicly available genome assemblies and also has the potential to identify novel CRISPR cleavage systems. With the controversial battle for ownership of CRISPR-related intellectual property seemingly over, the effort to establish an automated commercial CRISPR-cell pipeline led by Horizon Discovery, in partnership with Solentim and ERS Genomics, is at the forefront.

Microfluidics can also ostensibly automate creation, validation, and study of novel iPSC lines from biological or patient samples. However, there is a problem of scale, with the number of cells in a microdroplet probably inadequate for therapeutic purposes. The New York Stem Cell Foundation, with its Global Stem Cell Array, is seeking to optimize scalability and throughput via automation with an eight-step robotic workflow that proceeds from thawing of somatic cells through reprogramming, sorting, clone selection, culture expansion, and freezing. Additionally, Panasonic has a joint venture with Kyoto University for a commercial automated iPSC culture system, and Evotec has partnered with Harvard to build an iPSC-based industrialized drug-screening platform. These systems are potentially adaptable to iPSC differentiation into organoids and other derivative cultures that can take many labor-intensive months to cultivate and validate. Comprehensive automation may relieve common flaws and inconsistencies in iPSC studies ascribed to the sensitivities of cultures that typically require daily maintenance and observation. With automation, humans can get their weekends back.

In that extra time, investigators and technicians will soon be able to take much better notes just by saying them to a laboratory digital assistant. The first of these devices, LabTwin, is commercially available and is already initiating partnerships with universities and institutions. (Not by itself. Not yet.) Its machine-learning platform allows voice-activated note-taking, access to standard protocols, and centralization of laboratory inventory and reagent lists. A slightly older, more familiar digital assistant has already served basic functions in scientific settings, with the ability to set multiple timers and access rudimentary information such as molecular weights and boiling points. Since its introduction, end users have modified Alexa software to augment its capabilities, and the most successful of the science-oriented modifications has led to a partnership between a software developer trying to help his scientist wife and the world’s largest corporation. The resulting product, HelixAI, is in development stages, but it promises to improve efficiency and reproducibility through real-time availability of stepwise protocols and individually customizable scientific reference information. It also aims to optimize safety and use of space through add-ons such as access to chemical data sheets and a mapping feature that can identify bottlenecks in laboratory floor plans.

Ideally, as laboratory digital assistants progress in their capabilities and there is more vigorous competition in what is yet an inchoate and exiguous field, they will foster a new era of efficiency in experimentation, record keeping, and communication. For instance, the future duties of an incoming lab manager may include taking mobile photos of old laboratory notebooks and protocol sheets and syncing them to a centralized AI-powered database that employs handwriting recognition technology and can make the information immediately available to all group members. Additionally, if software is standardized and made reciprocally compatible, different types of data could be accessed at will through individual devices acting in the manner of servers, sparing users the trip from computer to computer with a flash drive.

Even in the face of all this potential, there is also cause for concern. Consumer and societal trust in the tech titans likely to either develop or acquire this technology is at its nadir. Unpublished data and clinical information are highly sensitive and necessarily proprietary, and reports of home devices monitoring private conversations underline that mistrust. Heightening this concern is the frequently bug-filled nature of first-generation technology, which may make precious data more susceptible to theft or loss. Moving forward, scientific digital assistants should, at minimum, show immediate out-of-the-box competence for the features they claim. Finally, though, let the optimists among us rejoice, however conditionally. The 20-hour workweek may be upon us after all.