A lab’s efficiency revolves around workflow—how samples get prepared and data get collected, analyzed, and interpreted. An effective workflow even impacts the reproducibility of data in the lab, because the right system ensures that something gets done the same way every time. The tricky part is that most labs need some customizing of their workflow, something that fits just right with their research objectives and personnel.

No single approach improves every lab’s workflow; the best strategy combines several, and some depend on the kind of lab. When asked for two top suggestions for improving the workflow in a small academic lab, Lisa Thomas—senior director, marketing, life science mass spectrometry at Thermo Fisher Scientific (Waltham, MA)—goes with quality control and designing a scalable process from the start.

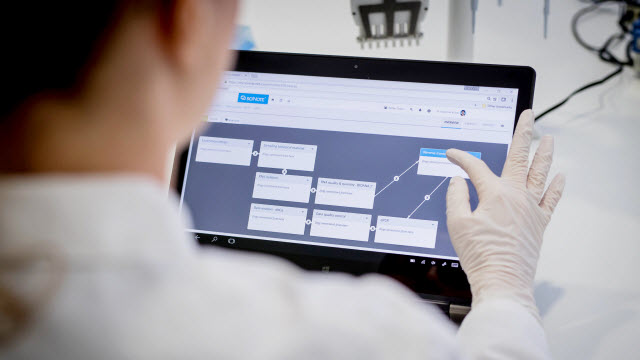

An electronic lab notebook helps scientists keep processes and information organized, which improves a lab’s workflow. Image courtesy of sciNote

For quality control, she says, “Implementing off-the-shelf certified calibration solutions in any method you develop and potentially plan to run routinely even in a research setting is just good laboratory practice.” Calibrated solutions can save time and add accuracy in many technologies, such as liquid chromatography (LC) and mass spectrometry (MS). As an example, Thomas notes, “Thermo Scientific Pierce Calibration Solutions for mass spectrometry are ready-to-use liquid formulations that can quickly aid in calibrating our LC-MS instrumentation and help determine when something isn’t right.”

In addition to calibration standards, quality control involves many other elements. For instance, Thomas encourages scientists to use standards for sensitivity assessment, for determination of digestion efficiency, or as a control for sample analysis. These standards increase the odds of experimental success, and they can reduce variability compared with using some do-it-yourself approaches. “High purity, validated mobile phases, and acidic ion-pairing agents are essential for achieving effective and reproducible liquid chromatography separation of peptides for electrospray ionization MS,” Thomas explains.

Seeking scalability

The complexity of the research environment—not just planning and running experiments but also finding ways to fund them—makes it easy to get tunnel vision, focusing only on the job at hand. Given that funds come with limits, keeping workflows running as smoothly as possible requires some thinking ahead.

If a workflow fits only a lab’s current needs and provides no room for growth, any need to increase throughput or handle more samples could require going back to the drawing board and reworking the entire process from scratch. A method scales more easily, for example, if it includes some automation from the start. Then, if a lab needs to run more samples, the automation can be adjusted without changing the entire process.

Tools for factoring in the future already exist, and the options keep increasing. “More vendors offer scalable solutions, like the Transcend II HPLC System, where you can incrementally add up to four channels as demand rises,” Thomas points out. “Or look for innovations that enable plug-and-play modular configurations to a single mainframe, like the Trace Series gas chromatograph, which enables users to rapidly change or add injection and detector options to suit their testing needs or simply keep their systems online.”

Sometimes scientists just don’t know what will do the most to improve a workflow. When possible, ask a vendor for a demonstration, especially with smaller devices. “For large instrumentation, looking to connected lab capabilities can be key,” Thomas explains. “Our Thermo Fisher Connect enables labs to boost productivity with secure, remote access to data and instruments.”

A family tradition

Some solutions to chromatography start at home. In an Apple-like beginning, Phillip James started a chromatography company in a back room of his house in 1994, and he named it Cambridge Scientific Instruments. The first products were chromatography accessories, including an electronic programmable pressure controller for gas chromatography (GC) and a vacuum degasser for LC. Today, his company is known as Ellutia (Ely, UK), and it makes complete GC platforms, including the 200 Series GC. The founder’s son and marketing director at Ellutia, Andrew James, says, “We try to fill a niche, producing custom solutions and systems for people to solve problems that other manufacturers are not willing to solve.”

For custom or off-the-shelf solutions, GC can create various workflow obstacles. “As GC manufacturers,” says James, “we see several stages of GC analysis, all of which have potential bottlenecks.” Those bottlenecks start at the beginning, with sample preparation. “Getting samples to the stage where they can be introduced to GC can be a long-winded process and very manual,” James explains. This stage involves mixing chemicals, processing the sample, and so on—all of which can slow down a workflow or make it less repeatable. In an ideal scenario, a user places the raw sample into a vial, and then an automated system completes the sample prep, introduces the sample to the GC, completes the analysis, and produces the report, all with the press of a single button, but that capability remains futuristic.

The GC platform matters, too, in workflow. GC comes in three main forms: conventional GC, the traditional column in an oven, with run times from 30 minutes to a couple of hours; fast GC, which uses an oven with shorter and narrower columns to reduce the analysis time, but at the cost of sample capacity and detection limits; and ultrafast GC, which uses rapid direct heating of a column with electric current, reducing the run time to five or six minutes. “This is great for method development, where you try something, change something, and try it again,” James explains, “because you can do it faster and easier.” Later in 2017, Ellutia will release a GC platform that can do all three techniques.

After running a GC, data become the next potential bottleneck. Depending on a lab’s needs, this stage can range from software that comes with a GC platform to implementation of a full laboratory information management system (LIMS), which can automate many steps in the process.

Beyond helping customers address a range of slow spots in a GC process, Ellutia even designs custom solutions. “This covers quite a broad range,” James says, “from a customer looking at permeation of rubber gloves to a nuclear power station monitoring reactor gases to a portable ultrafast GC to do on-site analysis at gas stations.” In thinking about tackling such diverse problems, James says, “It’s fun and interesting because you get your eyes opened to different industries and things you might never have dreamed of when we first started this company.”

Keep it organized

For any workflow, organization adds efficiency. “To develop efficient workflows, organizations need flexible software solutions that can adapt to the way labs are performing their work,” says Klemen Zupancic, CEO at sciNote (Middleton, WI). The solution needs to be flexible enough to handle a range of scales and lab processes.

An organizational tool must also communicate with software on various platforms. “The best solution will not reside in one piece of software but rather a network of systems,” Zupancic says. So his company developed sciNote—a free electronic lab notebook—as modular, open-source software. “We encourage interoperability between different software solutions and lab instruments,” he says.

Other tools for connecting technologies also exist. As Garrett Mullen, program product owner for informatics at Waters (Milford, MA), says, “There are technologies available on the market that provide for the interfacing of simple devices, like balances and pH meters, that can automatically capture and store data, ensuring correct digits and units of measure are captured.”

Other digital tools help scientists manage automated workflows, and these tools range from simple to complex. “An example of a simple technology is valuation tools on a digital form that compare actual results to desired or target results at time of capture,” says Mullen. “These tools can be as simple as a green check or a red X that indicates whether an expected result or value is in or out of specification.”
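The green-check or red-X comparison Mullen describes can be sketched in a few lines. This is only an illustration, not any vendor's implementation: the `Spec` class, the `check_result` function, and the pH target and tolerance are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Spec:
    """Hypothetical specification: a target value and an allowed deviation."""
    target: float
    tolerance: float  # maximum absolute difference from the target


def check_result(actual: float, spec: Spec) -> str:
    """Return a check mark if the value is in specification, otherwise an X."""
    in_spec = abs(actual - spec.target) <= spec.tolerance
    return "✓" if in_spec else "✗"


# Example: a buffer that should read pH 7.00, give or take 0.05 (assumed values).
ph_spec = Spec(target=7.00, tolerance=0.05)
for reading in (7.02, 7.20):
    print(reading, check_result(reading, ph_spec))
```

Running the check at the moment of capture, rather than at review, is what lets an out-of-specification value get flagged while the sample is still on the bench.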

Where to start

To improve any workflow, it helps to know where to start. A good place is the most repetitive process in a lab. “Measure the process from beginning to end and record all of the manual data entry and recording steps, looking at each review step as well as the number of sign-offs required,” Mullen suggests. “Identify which tasks can be automated or eliminated and which are most frequently a source of error.”
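One rough way to act on that advice is to log each step of the process and then rank the manual ones by how often they cause errors. The sketch below assumes a lab has already recorded this information; the `Step` fields, the `rank_candidates` function, and the sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One recorded step in a workflow (all fields are illustrative)."""
    name: str
    manual: bool        # True if a person enters or transcribes data by hand
    minutes: float      # typical time the step takes
    errors_logged: int  # errors traced back to this step during the review period


def rank_candidates(steps: list[Step]) -> list[Step]:
    """Return manual steps, most error-prone first, with time as a tiebreaker."""
    manual_steps = [s for s in steps if s.manual]
    return sorted(manual_steps, key=lambda s: (s.errors_logged, s.minutes), reverse=True)


# Made-up numbers for a sample-weighing workflow.
workflow = [
    Step("Weigh sample", manual=False, minutes=2, errors_logged=0),
    Step("Transcribe weight to notebook", manual=True, minutes=1, errors_logged=7),
    Step("Enter sample ID in spreadsheet", manual=True, minutes=3, errors_logged=2),
    Step("Reviewer sign-off", manual=True, minutes=10, errors_logged=0),
]

for step in rank_candidates(workflow):
    print(f"{step.name}: {step.errors_logged} errors, {step.minutes} min")
```

The step at the top of the list is a reasonable first candidate for automation or elimination.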

Some tools make it easier to manage automated workflows, such as this example that includes a ‘green check’ or a ‘red x’ to indicate when a result is in or out of specification. Image courtesy of Waters

With a key bottleneck identified, think about ways to automate it. “Not all processes can be automated, but do not be discouraged,” Mullen says. “Work with the ones that can, and you will have more time available to improve those processes that cannot be automated.”

Keeping track of information matters as much as the mechanical work. That means keeping the information from a workflow together. “For example, scientists often need to account for which samples were included in a specific part of the experiment and which results they generated,” Zupancic explains. “In addition, they should also track which instrument was used to generate their raw data as well as who analyzed and confirmed the results, and when.”
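The items Zupancic lists map naturally onto a single record per result. The sketch below is one possible shape for such a record, not sciNote's data model; the `ResultRecord` class, its field names, and the `records_for_step` helper are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ResultRecord:
    """Ties one result to its sample, experiment step, instrument, and people."""
    sample_id: str
    experiment_step: str
    instrument: str
    result: float
    analyzed_by: str
    confirmed_by: Optional[str] = None
    confirmed_at: Optional[datetime] = None

    def confirm(self, reviewer: str) -> None:
        """Record who confirmed the result and when (UTC timestamp)."""
        self.confirmed_by = reviewer
        self.confirmed_at = datetime.now(timezone.utc)


def records_for_step(records: list[ResultRecord], step: str) -> list[ResultRecord]:
    """Answer 'which samples were included in this part of the experiment?'"""
    return [r for r in records if r.experiment_step == step]


# Hypothetical usage with made-up identifiers.
record = ResultRecord(
    sample_id="S-001",
    experiment_step="digestion",
    instrument="LC-MS 2",
    result=0.93,
    analyzed_by="A. Analyst",
)
record.confirm("B. Reviewer")
print(record)
```

Storing the instrument and the reviewer alongside the value means the questions of who, what, and when can be answered from one place rather than pieced together from separate notebooks.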

So, getting the most from a specific workflow requires analyzing it. After that, a scientist must imagine an improvement, find the technology to make it happen, and then test the outcome.