A collaborative effort led by Argonne National Laboratory has resulted in a real-time AI engine designed to process the massive influx of data generated by advanced imaging facilities. This breakthrough addresses a significant bottleneck in scientific research: the delay between data collection and analysis. By deploying high-performance computing capabilities directly at the source, researchers can now receive immediate feedback on experiments, potentially saving weeks of manual review and reducing resource waste.

Implementing real-time AI for high-throughput imaging

The volume of data produced by modern light sources and electron microscopes is staggering. Traditionally, data has been generated faster than researchers could analyze it, delaying the point at which datasets become usable insights. Because analysis happened offline, after collection, a misaligned sample or an incorrect setting often went unnoticed until the experimental session had ended and the window for adjustment had closed.

To solve this, a multilab team developed the Synergistic Neutron and Photon Science - Intelligence (SYNAPS-I) AI platform. Unlike general-purpose systems, this billion-parameter foundation model is designed to "think" like the imaging tools themselves by incorporating the physics of coherent imaging directly into the model. The system was successfully tested using ptychography, an X-ray technique that reconstructs sharp, high-resolution images from overlapping diffraction patterns.

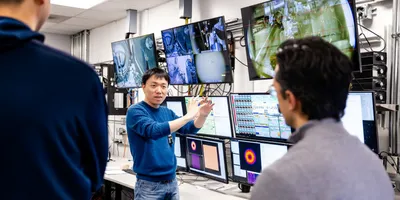

The engine was tested at the Advanced Photon Source (APS) and the Center for Nanoscale Materials (CNM), both of which are Department of Energy Office of Science user facilities. By integrating the AI directly into the instrument's workflow, the system can perform complex reconstructions in seconds rather than hours or days.

Practical benefits of edge computing in the laboratory

The primary advantage of this technology is the shift to edge computing, meaning data is processed at or near the instrument that generates it. This approach offers several operational benefits:

- Reduced data storage costs, with models refined on high-performance computing resources while rapid analysis continues at the beamline

- Increased instrument availability through real-time identification of defects in materials, so that unsuccessful runs can be stopped early and only promising ones proceed

- Faster turnaround times for multi-user facilities, with testing showing data processed 100 times faster than in traditional experiments without AI

- Improved accuracy in automated workflows, with the AI acting as a cognitive partner capable of detecting subtle correlations and generating hypotheses

Mathew Cherukara, leader of the Argonne SYNAPS-I team, emphasizes that the platform helps turn facilities into truly intelligent, self-driving laboratories. The model is trained on data from more than 100 beamlines, which is intended to let it scale across the entire Department of Energy complex.

Strategic data management and laboratory efficiency

Integrating these AI tools requires a shift in how laboratory leaders approach digital infrastructure. Rather than treating data processing as a post-experiment task, they must build it into the experimental design itself. This real-time capability allows researchers to make informed decisions on the fly, such as guiding manufacturing processes or immediately identifying technologically impactful materials.

By adopting these advanced workflows, laboratories can maintain a competitive edge in fields ranging from microelectronics to biomedical research. Processing data as fast as it is collected lets the human element of the lab, the researchers and technicians, focus on interpreting results rather than managing data backlogs.

This article was created with the assistance of Generative AI and has undergone editorial review before publishing.