Developing novel alloys, advanced ceramics, and next-generation semiconductors relies fundamentally on accurate elemental analysis. For laboratory managers overseeing advanced materials development, quantifying both major matrix components and ultra-trace impurities is a daily operational hurdle. Even minute variations in elemental composition can drastically alter a material's thermal stability, electrical conductivity, or tensile strength, making precise characterization a non-negotiable step in the research and development pipeline.

In today's highly competitive manufacturing landscape, the pressure to accelerate time-to-market for new materials places immense strain on analytical laboratories. Lab managers are tasked with processing a higher volume of complex solid samples while simultaneously pushing the boundaries of detection limits. The financial implications are significant; a delayed analytical result can stall an entire production line, while an inaccurate reading can lead to catastrophic material failure in the field.

However, analyzing solid materials presents a distinct set of laboratory challenges compared to testing aqueous solutions. Lab managers must navigate complex sample preparation protocols, manage severe spectral interferences inherent to dense solid matrices, and ensure that their chosen instrumentation can meet increasingly stringent detection limits. Balancing these high-throughput demands with the acute need for extreme precision requires a strategic approach to workflow design, contamination control, safety protocols, and equipment procurement.

Why is trace elemental analysis critical for advanced materials?

In the realm of advanced materials, the distinction between a high-performance product and a failed batch often comes down to parts-per-million (ppm) or parts-per-billion (ppb) elemental variations. In lithium-ion battery development, for example, trace heavy metal impurities like iron or copper in cathode precursors can lead to severe performance degradation, reduced cycle life, or catastrophic thermal runaway. Similarly, in the aerospace sector, the precise ratio of refractory metals in superalloys determines the material's ability to withstand extreme turbine temperatures without yielding or fracturing.

The rapid expansion of additive manufacturing (3D printing) has further intensified the need for rigorous elemental profiling. Metal powders used in these processes must meet strict compositional tolerances before they are loaded into a printer. As these powders are recycled and reused across multiple printing cycles, they risk accumulating oxygen, nitrogen, or cross-contaminating metals from the printing chamber. These invisible elemental shifts can severely compromise the mechanical integrity and fatigue resistance of the final printed part.

Likewise, the semiconductor industry relies on ultra-trace elemental analysis to verify deliberate dopant concentrations and to identify trace metallic impurities. Even parts-per-trillion (ppt) contamination can disrupt the electrical properties of silicon wafers, rendering expensive microchips defective. Quality control (QC) laboratories must continuously monitor these elemental profiles to verify that materials meet strict engineering specifications. This requires instrumentation capable of resolving trace signals against a highly concentrated, complex background matrix. Failure to accurately detect these elements can result in compromised downstream manufacturing, costly product recalls, and severe damage to the laboratory’s analytical reputation.

Controlling the laboratory environment for trace analysis

When an analytical laboratory pushes its detection limits into the ppb and ppt ranges, the physical laboratory environment itself becomes a primary source of contamination. For lab managers, achieving accurate results for advanced materials means investing as much in facility infrastructure as in the analytical instruments themselves. Ubiquitous environmental elements—such as iron, zinc, aluminum, and calcium—are present in airborne dust, standard laboratory glassware, and even the skin and cosmetics of the analysts.

To prevent background contamination from ruining an analytical batch, laboratories must implement rigorous environmental controls. Key contamination control strategies include:

- Air quality management: Upgrading the sample preparation area with positive-pressure HEPA filtration or installing specialized Class 100 (ISO 5) laminar flow hoods. This prevents airborne particulates from settling into open digestion vessels.

- Reagent purity: Standard analytical-grade acids are entirely insufficient for trace analysis. Laboratories must utilize high-purity (often sub-boiling distilled) acids, generally labeled as "trace metal" or "environmental" grade, to prevent reagent-based contamination. Lab managers must factor the significantly higher cost of these high-purity reagents into their cost-per-sample calculations.

- Specialized labware: Traditional borosilicate glassware can leach elements such as boron, sodium, and aluminum, along with adsorbed trace metals, into highly acidic samples over time. Facilities conducting trace materials analysis must transition to specialized fluoropolymer (PTFE or PFA) or ultra-clean polypropylene containers. Furthermore, all labware requires rigorous, documented acid-leaching protocols prior to use to keep the sample pathway as inert as possible.
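One way to check whether these controls are working is to track procedural blanks alongside each batch. The sketch below is a minimal illustration, assuming the common 3-sigma and 10-sigma conventions for the limit of detection (LOD) and limit of quantitation (LOQ); a laboratory's own SOP may define these differently. The function names and the iron blank readings are hypothetical.

```python
from statistics import mean, stdev


def blank_limits(blank_readings_ppb):
    """Return (mean blank, LOD, LOQ) from replicate procedural blanks.

    Assumes the 3-sigma / 10-sigma convention; adjust to the lab's SOP.
    """
    if len(blank_readings_ppb) < 2:
        raise ValueError("need at least two blank replicates")
    avg = mean(blank_readings_ppb)
    sd = stdev(blank_readings_ppb)  # sample standard deviation
    return avg, 3 * sd, 10 * sd


def blank_corrected(sample_ppb, blank_readings_ppb):
    """Subtract the mean blank; return None if the net value is below the LOD."""
    avg, lod, _ = blank_limits(blank_readings_ppb)
    net = sample_ppb - avg
    return net if net >= lod else None


# Hypothetical iron readings (ppb) from five procedural blanks
fe_blanks = [0.8, 1.0, 1.2, 0.9, 1.1]
avg, lod, loq = blank_limits(fe_blanks)
print(f"mean blank {avg:.2f} ppb, LOD {lod:.2f} ppb, LOQ {loq:.2f} ppb")
```

A rising mean blank or a widening spread between blank replicates is an early sign that air quality, reagents, or labware need attention before sample results are affected.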

Overcoming sample preparation bottlenecks for solid matrices

The accuracy of any elemental analysis is intrinsically linked to the quality of sample preparation. Unlike water testing, extracting analytes from solid materials like advanced ceramics, geological ores, or specialized polymers requires aggressive, labor-intensive techniques. Deciding between destructive and non-destructive preparation is one of the first workflow decisions a lab manager must make, as it directly impacts turnaround time and laboratory safety.

For techniques requiring liquid samples, such as Inductively Coupled Plasma Optical Emission Spectroscopy (ICP-OES) or ICP Mass Spectrometry (ICP-MS), solid materials must undergo complete digestion. This often involves:

- Microwave digestion: Utilizing strong, high-purity acids (such as nitric or hydrochloric acid) inside sealed vessels under high heat and pressure to break down the material matrix. Closed-vessel microwave digestion has largely replaced traditional open hot-block digestions because it prevents the volatilization of crucial elements like arsenic and mercury, significantly reduces sample preparation time, and consumes less of the expensive high-purity acid.

- Hydrofluoric acid (HF) protocols: Silicate-based materials and advanced ceramics frequently require HF for complete dissolution. HF presents extreme and unique safety hazards, as it can penetrate tissue and cause systemic toxicity. Any workflow that uses it requires lab managers to implement specialized fume hood ventilation, stringent personal protective equipment (PPE) requirements, and dedicated emergency response protocols (including the immediate availability of calcium gluconate gel).

- Dilution and filtration: Highly concentrated digests must be carefully diluted so that heavy dissolved-solids loads do not suppress analyte signals within the plasma, and filtered so that undissolved particulates do not clog delicate instrument nebulizers.
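Every digestion and dilution step has to be reversed arithmetically when the result is reported for the original solid. The sketch below shows that back-calculation, along with a rough estimate of the dissolved-solids load. It assumes the sample dissolves completely; the function names and example values are hypothetical.

```python
def solid_concentration_mg_per_kg(solution_ug_per_l, final_volume_ml,
                                  sample_mass_g, extra_dilution=1.0):
    """Convert a measured solution concentration to mg/kg in the original solid."""
    if sample_mass_g <= 0 or final_volume_ml <= 0 or extra_dilution < 1:
        raise ValueError("mass and volume must be positive; dilution factor must be >= 1")
    # Undo the post-digestion dilution to get the concentration in the digest
    digest_ug_per_l = solution_ug_per_l * extra_dilution
    # ug/L times litres of digest gives total micrograms of analyte
    analyte_ug = digest_ug_per_l * (final_volume_ml / 1000.0)
    # ug per g of solid is numerically equal to mg/kg
    return analyte_ug / sample_mass_g


def dissolved_solids_percent(sample_mass_g, final_volume_ml, extra_dilution=1.0):
    """Approximate dissolved solids in % (m/v), assuming complete dissolution."""
    return 100.0 * sample_mass_g / (final_volume_ml * extra_dilution)


# Hypothetical run: 0.25 g digested to 50 mL, diluted 10x, 12.5 ug/L measured
result = solid_concentration_mg_per_kg(12.5, 50.0, 0.25, extra_dilution=10.0)
load = dissolved_solids_percent(0.25, 50.0, extra_dilution=10.0)
print(f"{result:.1f} mg/kg in the solid, {load:.2f}% dissolved solids at the nebulizer")
```

Comparing the estimated solids load against the instrument's stated tolerance before the run makes it easier to choose a dilution factor deliberately rather than by habit.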

Because sample digestion represents a significant workflow bottleneck and introduces profound safety risks, many materials laboratories are investing in automated digestion blocks and automated liquid handlers. These robotic platforms improve analytical reproducibility, limit technician exposure to hazardous acid fumes, and increase overall sample throughput by operating unattended overnight.

Choosing the right analytical technique for materials testing

Selecting the appropriate instrumentation requires a careful evaluation of the laboratory’s analytical goals, capital budget, and desired turnaround times. Lab managers must look beyond the initial purchase price and consider the ongoing operational expenses—including consumables like ultra-high-purity argon gas, detector replacements, and routine maintenance contracts.

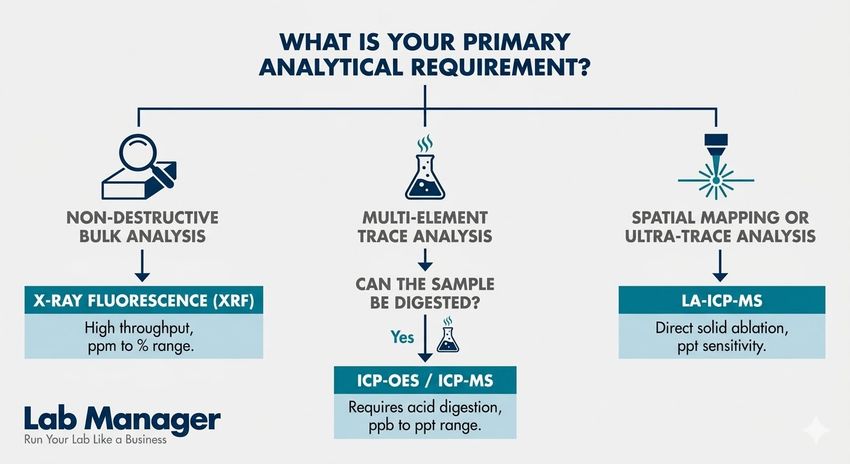

Lab managers generally evaluate three primary technologies for materials characterization: X-ray Fluorescence (XRF), ICP-OES, and Laser Ablation Inductively Coupled Plasma Mass Spectrometry (LA-ICP-MS).

Table 1: Comparison of elemental analysis techniques for solid materials.

| Analytical Technique | Sample Preparation | Detection Limits | Throughput | Best Application |
|---|---|---|---|---|
| X-Ray Fluorescence (XRF) | Minimal (non-destructive solid analysis) | Parts per million (ppm) to percentage (%) | Very High | Bulk compositional analysis; high-throughput screening of alloys and powders. |
| ICP-OES | Extensive (requires complete liquid digestion) | Parts per billion (ppb) to parts per million (ppm) | High | Multi-element trace analysis; routine QC for materials that can be easily digested. |
| LA-ICP-MS | Minimal (direct solid ablation) | Parts per trillion (ppt) to parts per billion (ppb) | Medium | Ultra-trace impurity profiling; spatial mapping and depth profiling of solid surfaces. |

XRF is often the workhorse of the materials testing lab. Due to its non-destructive nature and minimal sample preparation requirements, it is highly cost-effective and ideal for rapid quality control on the manufacturing floor. However, XRF struggles with lighter elements and cannot reach the trace-level detection limits required for specialized applications.

When measuring trace-level impurities in a complex matrix, ICP-OES provides a robust middle ground, capable of handling high levels of total dissolved solids (TDS) without suffering excessive signal suppression. However, when researchers need to quantify ultra-trace impurities down to the ppt level, or map the spatial distribution of elements across a semiconductor wafer without dissolving the sample, the superior sensitivity of LA-ICP-MS becomes necessary. While LA-ICP-MS bypasses the need for hazardous acid digestions, it introduces significant capital costs and requires highly specialized operators to maintain the laser optics and interpret the complex mass spectra.
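The trade-offs in Table 1 can be expressed as a simple first-pass screen. The sketch below is only an illustration: the cut-offs at 1 ppb and 1,000 ppb (1 ppm) are assumptions read off the order-of-magnitude ranges in the table, and the function name is hypothetical. It ignores budget, operator skill, and light-element limitations, which a real procurement decision would weigh.

```python
def suggest_technique(required_limit_ppb, needs_spatial_map=False, digestible=True):
    """Suggest a technique from the Table 1 ranges (a rough screen only)."""
    if required_limit_ppb <= 0:
        raise ValueError("required detection limit must be positive")
    if needs_spatial_map:
        # Mapping or depth profiling of a solid surface points to laser ablation
        return "LA-ICP-MS"
    if required_limit_ppb >= 1000:
        # ppm level and above: non-destructive bulk analysis is sufficient
        return "XRF"
    if required_limit_ppb >= 1 and digestible:
        # ppb to ppm in a sample that can be fully digested
        return "ICP-OES"
    # Sub-ppb limits, or a solid that cannot practically be digested
    return "LA-ICP-MS"


# Hypothetical requests
for limit, kwargs in [(5000, {}), (50, {}), (0.01, {}), (50, {"digestible": False})]:
    print(limit, kwargs, "->", suggest_technique(limit, **kwargs))
```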

Figure: A quick decision guide for selecting the appropriate elemental analysis technique based on sample characteristics and detection limits. Credit: GEMINI (2026)

Managing data integrity and analytical compliance

Running the instrument correctly is only a fraction of the lab manager's responsibility; proving the validity of the data is equally critical. In advanced materials development, laboratories must frequently align with international quality standards, such as ISO/IEC 17025, to ensure testing competence and traceability. This requires meticulous record-keeping, strict calibration routines, and an unwavering commitment to data integrity.

To demonstrate analytical accuracy, laboratories must routinely analyze certified reference materials, such as the Standard Reference Materials (SRMs) issued by NIST, alongside their routine samples. Matrix effects in solid materials can suppress or enhance instrumental signals (physical interferences), and overlapping emission lines or isobaric species can bias results (spectral interferences). Using matrix-matched reference materials and adding internal standards during the instrument run are therefore essential steps for accurate calibration.
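The two calculations behind this paragraph are short. The sketch below shows a simple ratio-based internal standard correction and a percent-recovery check against a certified value. The 90 to 110% acceptance window is an assumption for illustration, not a value from any standard, and the function names and readings are hypothetical.

```python
def internal_standard_corrected(analyte_signal, is_signal_sample, is_signal_calibration):
    """Scale the analyte signal by how much the internal standard drifted."""
    if is_signal_sample <= 0:
        raise ValueError("internal standard signal in the sample must be positive")
    # If the matrix suppressed the internal standard by 20%, the analyte is
    # assumed to be suppressed by the same fraction and is scaled back up.
    return analyte_signal * (is_signal_calibration / is_signal_sample)


def percent_recovery(measured, certified):
    """Recovery of a reference material as a percentage of its certified value."""
    if certified <= 0:
        raise ValueError("certified value must be positive")
    return 100.0 * measured / certified


def recovery_ok(measured, certified, low=90.0, high=110.0):
    """True when recovery falls inside the (assumed) acceptance window."""
    return low <= percent_recovery(measured, certified) <= high


# Hypothetical readings: internal standard suppressed from 100,000 to 80,000 counts
corrected = internal_standard_corrected(8000, 80000, 100000)
recovery = percent_recovery(47.5, 50.0)
print(f"corrected signal {corrected:.0f} counts, recovery {recovery:.1f}%")
```

The correction only holds when the internal standard behaves like the analyte in the plasma, which is why the choice of internal standard element matters as much as the arithmetic.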

Handling the vast amount of spectral data, calibration curves, and quality control metrics generated by modern spectrometers requires a centralized Laboratory Information Management System (LIMS). Relying on manual transcription or fragmented spreadsheets introduces a high risk of human error and makes regulatory compliance nearly impossible.

A properly configured LIMS directly integrates with the lab’s analytical instruments—including connected analytical balances used during sample prep—to automate data transfer. The software tracks the expiration dates of reference materials, monitors instrument performance over time to schedule preventative maintenance, and flags QC failures in real-time. By maintaining a secure, unalterable 21 CFR Part 11-compliant audit trail for every sample, lab managers can ensure complete data integrity, drastically simplify the regulatory auditing process, and accelerate the release of new materials to the market.
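The kind of rule a LIMS applies when it flags a QC failure can be sketched in a few lines. This is not the interface of any particular LIMS product; the record fields, the function name, and the 90 to 110% recovery limits are all hypothetical and chosen only to illustrate the checks described above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class QCResult:
    sample_id: str
    reference_lot: str
    reference_expiry: date
    recovery_percent: float


def qc_flags(result, run_date, low=90.0, high=110.0):
    """Return a list of human-readable flags for one QC result (empty if clean)."""
    flags = []
    if result.reference_expiry < run_date:
        flags.append("reference material expired")
    if not (low <= result.recovery_percent <= high):
        flags.append("recovery out of limits")
    return flags


# Hypothetical QC records for one analytical run
run_day = date(2026, 3, 1)
good = QCResult("QC-001", "LOT-A", date(2027, 1, 1), 98.4)
bad = QCResult("QC-002", "LOT-B", date(2025, 12, 31), 82.0)
for record in (good, bad):
    print(record.sample_id, qc_flags(record, run_day) or "pass")
```

In a production system these checks would run automatically on data transferred from the instrument, with each flag written to the audit trail rather than printed.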

Conclusion: Optimizing your lab for advanced materials characterization

Supporting advanced materials development requires a highly optimized, end-to-end elemental analysis workflow. Lab managers must proactively address the severe bottlenecks associated with solid sample digestion, ensure rigorous safety protocols for hazardous reagents like hydrofluoric acid, and control the laboratory environment to prevent background contamination. Furthermore, investing in the analytical technology that best fits both the required detection limits and the laboratory’s operating budget is paramount for long-term success. By matching the right instrumentation—whether XRF, ICP-OES, or LA-ICP-MS—to the specific material matrix, and backing those workflows with robust, automated LIMS data management, laboratories can deliver the rapid, high-precision insights needed to drive materials innovation forward without compromising safety or compliance.

This article was created with the assistance of Generative AI and has undergone editorial review before publishing.