Routine environmental monitoring relies heavily on elemental analysis to detect and quantify heavy metals in our ecosystems. For laboratory managers overseeing environmental testing facilities, processing soil and water samples presents a complex set of operational challenges. High-throughput demands must be carefully balanced against the need for extreme accuracy, particularly when detecting trace elements like lead, arsenic, cadmium, and mercury.

Your lab must navigate a matrix of regulatory requirements while maintaining operational efficiency. Whether evaluating a municipal water supply or analyzing soil from an industrial brownfield, selecting the right analytical methodology directly impacts your laboratory's turnaround time and cost per sample, and with them its profitability and reputation. Understanding the nuances of sample preparation, contamination control, instrument capabilities, and data management is essential for optimizing laboratory performance.

What are the regulatory drivers for heavy metal testing in soil and water?

Environmental testing laboratories must adhere to strict regulatory frameworks that dictate permissible limits for heavy metals. In the United States, the Environmental Protection Agency (EPA) specifies approved analytical methods for drinking-water compliance and publishes SW-846 guidance methods widely used in RCRA-related testing.

For drinking water, laboratories must use methods approved under 40 CFR Part 141, such as EPA Method 200.8 for ICP-MS where applicable. For hazardous-waste and certain solid-matrix applications, SW-846 methods such as EPA Method 6020B may apply. Compliance requires not only achieving the required limits of detection (LOD) but also maintaining extensive documentation of instrument calibration, maintenance, and operator training. Failure to meet these stringent requirements can result in invalidated data, loss of accreditation, and severe legal consequences for the facility relying on the results.

How do you overcome sample preparation challenges in soil and water testing?

The accuracy of any elemental analysis is fundamentally limited by the quality of sample preparation. Water samples are generally more straightforward, but they typically require acid preservation to keep metals in solution, plus filtration before acidification when dissolved metals must be distinguished from total recoverable metals, following guidelines similar to the USGS National Field Manual for the Collection of Water-Quality Data.

Conversely, soil samples require aggressive extraction techniques to transition solid-phase metals into a liquid solution suitable for instrumental analysis. This process is notoriously labor-intensive and represents a major bottleneck in sample turnaround times.

Common sample preparation steps for solid matrices include:

- Drying and homogenization: Removing moisture and grinding the soil to ensure a representative subsample.

- Acid digestion: Utilizing strong acids (like nitric and hydrochloric acid) combined with heat. Methods like EPA Method 3050B are standard for the digestion of sediments, sludges, and soils.

- Microwave digestion: Microwave digestion methods such as EPA 3051A for soils and EPA 3015A for certain aqueous samples can reduce turnaround time and acid use compared with conventional hot-block digestion.

- Filtration and dilution: Removing remaining silicates and particulates that could clog instrument nebulizers.
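The digestion and dilution steps above change the concentration the instrument actually sees, so the reading must be converted back to a soil concentration before reporting. The following is a minimal sketch of that arithmetic, assuming a dry-weight basis; the function name, parameter names, and example values are hypothetical and do not come from any EPA method text.

```python
def soil_concentration_mg_per_kg(digest_ug_per_l, final_volume_ml,
                                 sample_mass_g, dilution_factor=1.0):
    """Convert an instrument reading on a digest to a dry-weight soil result.

    digest_ug_per_l : concentration measured in the (possibly diluted) digest
    final_volume_ml : volume the digest was brought to after digestion
    sample_mass_g   : dry mass of soil that was digested
    dilution_factor : extra dilution applied before analysis (10 means 1:10)
    """
    if sample_mass_g <= 0:
        raise ValueError("sample mass must be positive")
    # Undo the dilution, then convert concentration to total micrograms of metal.
    total_ug = digest_ug_per_l * dilution_factor * (final_volume_ml / 1000.0)
    # Micrograms per gram is numerically identical to milligrams per kilogram.
    return total_ug / sample_mass_g


# Hypothetical example: 1.0 g of dried soil, digest brought to 100 mL,
# diluted 10x before analysis, instrument reads 25 ug/L.
result = soil_concentration_mg_per_kg(25.0, 100.0, 1.0, dilution_factor=10.0)
print(f"{result:.1f} mg/kg")
```

Keeping the dilution factor as an explicit argument makes it harder to lose track of a bench dilution when results are transcribed.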

To alleviate these bottlenecks, many high-throughput laboratories are adopting automated digestion blocks and robotic liquid handling systems. These platforms not only increase sample throughput but also reduce technician exposure to hazardous acid fumes and minimize human error during the critical pipetting phases.

How can labs prevent environmental contamination during trace analysis?

Achieving the ultra-trace detection limits required by modern environmental regulations demands meticulous contamination control. When analyzing drinking water at parts-per-trillion levels, the laboratory environment itself becomes a primary source of background interference. Lab managers must invest in specialized infrastructure and consumables to protect sample integrity.

Key contamination control considerations include:

- Air quality control: Utilizing positive-pressure cleanrooms or HEPA-filtered laminar flow hoods for sample preparation is highly recommended to prevent airborne dust from introducing ubiquitous elements like iron, zinc, or lead into the sample blanks.

- Reagent purity: Standard analytical-grade acids are insufficient for trace analysis. Laboratories must utilize high-purity (often sub-boiling distilled) acids, generally labeled as "trace metal" or "environmental" grade, to prevent reagent-based contamination.

- Labware management: Traditional glassware can leach heavy metals into acidic samples over time. Facilities must transition to specialized fluoropolymer (PTFE or PFA) or ultra-clean polypropylene containers. Furthermore, all labware requires rigorous acid-leaching protocols prior to use to ensure a completely inert sample pathway.

ICP-MS vs. ICP-OES vs. AAS: Which elemental analysis technique is right for your lab?

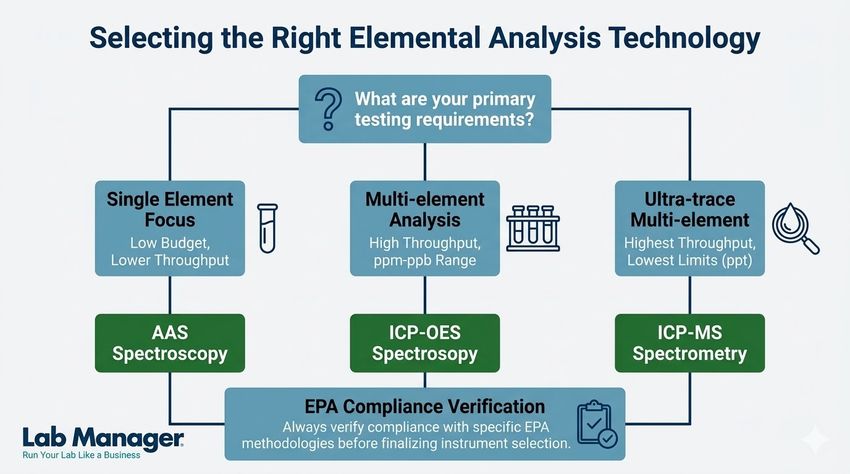

Selecting the appropriate analytical technique depends on the required detection limits, sample volume, and capital budget. Laboratory managers typically choose between three primary technologies: atomic absorption spectroscopy (AAS), inductively coupled plasma-optical emission spectroscopy (ICP-OES), and inductively coupled plasma-mass spectrometry (ICP-MS).

Table 1 summarizes the operational differences between these common elemental analysis technologies.

Table 1: Comparison of common elemental analysis techniques.

| Analytical Technique | Detection Limits | Sample Throughput | Capital Cost | Best Application |
|---|---|---|---|---|
| AAS (Flame/Graphite) | Parts per million (ppm) to parts per billion (ppb) | Low to Medium | Low | Single-element analysis; smaller labs with lower budgets. |
| ICP-OES | Parts per billion (ppb) | High | Medium | Multi-element screening; high-throughput soil and wastewater labs. |
| ICP-MS | Parts per trillion (ppt) | High | High | Ultra-trace multi-element analysis; drinking water compliance. |

For the ultra-trace detection required in drinking water analysis, ICP-MS is the gold standard due to its exceptional sensitivity and isotopic capabilities. However, for heavily contaminated soil or wastewater where analyte concentrations are high, ICP-OES often provides a more robust and cost-effective solution: it tolerates higher dissolved-solids loads and, because it measures optical emission rather than ion mass, it is not subject to the polyatomic interferences that affect ICP-MS.
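As a rough illustration, the trade-offs in Table 1 can be written down as a simple lookup. This is only a sketch: the data structure, the function name, and the ordinal rankings are assumptions made for the example, and a real purchasing decision would weigh matrix type, regulatory method requirements, and operating costs as well.

```python
# Ordinal rankings, assumed for illustration: a higher rank means lower
# detection limits or higher capital cost.
LEVEL_RANK = {"ppm": 0, "ppb": 1, "ppt": 2}
COST_RANK = {"low": 0, "medium": 1, "high": 2}

# Values paraphrased from Table 1; "best_level" is the most sensitive range listed.
TECHNIQUES = [
    {"name": "AAS", "best_level": "ppb", "multi_element": False, "cost": "low"},
    {"name": "ICP-OES", "best_level": "ppb", "multi_element": True, "cost": "medium"},
    {"name": "ICP-MS", "best_level": "ppt", "multi_element": True, "cost": "high"},
]


def candidate_techniques(required_level, need_multi_element, max_cost):
    """Return technique names that satisfy all three constraints, cheapest first."""
    matches = [
        t for t in TECHNIQUES
        if LEVEL_RANK[t["best_level"]] >= LEVEL_RANK[required_level]
        and (t["multi_element"] or not need_multi_element)
        and COST_RANK[t["cost"]] <= COST_RANK[max_cost]
    ]
    matches.sort(key=lambda t: COST_RANK[t["cost"]])
    return [t["name"] for t in matches]


print(candidate_techniques("ppt", True, "high"))    # drinking-water style request
print(candidate_techniques("ppb", True, "medium"))  # soil/wastewater screening
```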

Figure 1: A quick reference guide to matching your lab's budget, throughput, and trace-level detection requirements with the appropriate analytical instrument. Credit: GEMINI (2026)

How do you overcome spectral and physical interferences during instrumental analysis?

Even with pristine sample preparation, the complex chemical matrices of soil digests and wastewater introduce significant challenges during instrumental analysis. Laboratory personnel must routinely manage spectral and physical interferences to prevent false positive results or suppressed analyte signals.

Physical interferences occur when high concentrations of total dissolved solids (TDS) change the sample's viscosity, altering the efficiency of the sample introduction system. To correct for these transport variations, laboratories use internal standards: elements such as scandium, yttrium, or bismuth that are added at a constant concentration to all blanks, standards, and samples. The instrument software monitors the internal standard recovery in each solution and scales the analyte signals by it to compensate for matrix-induced suppression or enhancement.
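The internal standard correction described above comes down to a ratio. The sketch below shows one common form of it, assuming the reference response is taken from the calibration blank; the function names, count values, and the 60 to 125 percent acceptance window are illustrative assumptions, so use the limits specified in your own method.

```python
def internal_standard_recovery(is_counts_sample, is_counts_reference):
    """Fraction of the internal standard signal recovered in a sample."""
    if is_counts_reference <= 0:
        raise ValueError("reference internal standard counts must be positive")
    return is_counts_sample / is_counts_reference


def correct_analyte_signal(analyte_counts, is_counts_sample, is_counts_reference):
    """Scale the analyte signal by the internal standard recovery."""
    recovery = internal_standard_recovery(is_counts_sample, is_counts_reference)
    if recovery <= 0:
        raise ValueError("internal standard signal is zero; cannot correct")
    return analyte_counts / recovery


def recovery_in_window(recovery, low=0.60, high=1.25):
    """Assumed acceptance window; outside it, dilute and re-run rather than correct."""
    return low <= recovery <= high


# Hypothetical example: yttrium reads 100,000 counts in the calibration blank
# but only 80,000 counts in a high-TDS soil digest (20% suppression).
recovery = internal_standard_recovery(80_000, 100_000)
corrected = correct_analyte_signal(4_000, 80_000, 100_000)
print(f"recovery = {recovery:.0%}, corrected signal = {corrected:.0f} counts")
print("within window:", recovery_in_window(recovery))
```

The correction assumes the matrix affects the analyte and the internal standard to the same degree, which is why internal standards are usually chosen to be close in mass and ionization behavior to the analytes they correct.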

Spectral interferences are particularly problematic in ICP-MS. Polyatomic interferences occur when sample matrix components combine with the argon plasma gas to form ions with the same mass-to-charge ratio as the target analyte. A classic example is the combination of argon and chlorine (⁴⁰Ar³⁵Cl⁺), which has the same nominal mass as arsenic (⁷⁵As) and can lead to vastly inflated arsenic results in high-chloride samples. Modern ICP-MS systems combat this with collision/reaction cell technology, operated for example in kinetic energy discrimination (KED) mode. In this mode an inert gas such as helium is introduced into a cell ahead of the mass analyzer. Because polyatomic ions are physically larger than analyte ions of the same mass, they collide with the helium more often and lose more kinetic energy, and an energy barrier at the cell exit then rejects them before they reach the detector.
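The ArCl⁺ overlap with arsenic is simple arithmetic on nominal isotope masses, and the same check flags other well-known argon- and chlorine-based overlaps. The following sketch is illustrative only: the dictionaries and function names are made up for the example, it considers just the most abundant isotope of each element, and it assumes singly charged ions so that mass-to-charge ratio equals mass.

```python
# Nominal masses of the most abundant isotopes: 40Ar, 35Cl, 16O, 12C.
NOMINAL_MASS = {"Ar": 40, "Cl": 35, "O": 16, "C": 12}

# Analyte isotopes of interest and their nominal masses.
ANALYTES = {"51V": 51, "52Cr": 52, "56Fe": 56, "75As": 75}


def polyatomic_mass(atoms):
    """Nominal mass of a polyatomic ion built from the listed atoms."""
    return sum(NOMINAL_MASS[a] for a in atoms)


def find_overlaps(polyatomics, analytes):
    """Pair each polyatomic ion with any analyte sharing its nominal mass."""
    overlaps = []
    for atoms in polyatomics:
        mass = polyatomic_mass(atoms)
        for isotope, isotope_mass in analytes.items():
            if isotope_mass == mass:
                overlaps.append(("".join(atoms) + "+", isotope))
    return overlaps


candidates = [("Ar", "Cl"), ("Ar", "O"), ("Ar", "C"), ("Cl", "O")]
for ion, isotope in find_overlaps(candidates, ANALYTES):
    print(f"{ion} overlaps {isotope}")
```

A nominal-mass check like this only identifies where an overlap is possible. Whether it matters in practice depends on how much chloride, carbon, or other matrix is present in the sample.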

Why are quality control and a LIMS essential for heavy metal testing?

Running the instrument is only half the battle; ensuring the validity of the data requires a rigorous quality control (QC) program. Environmental laboratories must routinely analyze method blanks, laboratory control samples (LCS), matrix spikes, and calibration verification standards to prove the analytical batch is compliant.
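Most of these QC checks reduce to a percent-recovery calculation compared against an acceptance window. Here is a minimal sketch of that logic; the field names, example concentrations, and acceptance limits are hypothetical placeholders, and the limits that actually apply are the ones in your method and quality manual.

```python
def percent_recovery(measured, expected):
    """Recovery of a known standard (LCS or calibration verification), in percent."""
    return 100.0 * measured / expected


def matrix_spike_recovery(spiked_result, unspiked_result, spike_added):
    """Recovery of the spike alone, after subtracting the native sample result."""
    return 100.0 * (spiked_result - unspiked_result) / spike_added


def evaluate_batch(batch, limits):
    """Return {check_name: (value, passed)} for one analyte in one batch."""
    results = {}
    blank = abs(batch["method_blank"])
    results["method_blank"] = (blank, blank < limits["reporting_limit"])

    lcs = percent_recovery(batch["lcs_measured"], batch["lcs_true"])
    results["lcs"] = (lcs, limits["lcs"][0] <= lcs <= limits["lcs"][1])

    ms = matrix_spike_recovery(batch["ms_spiked"], batch["ms_unspiked"],
                               batch["ms_added"])
    results["matrix_spike"] = (ms, limits["ms"][0] <= ms <= limits["ms"][1])

    ccv = percent_recovery(batch["ccv_measured"], batch["ccv_true"])
    results["ccv"] = (ccv, limits["ccv"][0] <= ccv <= limits["ccv"][1])
    return results


# Hypothetical arsenic results in ug/L and assumed acceptance limits in percent.
example_batch = {
    "method_blank": 0.02,
    "lcs_measured": 9.6, "lcs_true": 10.0,
    "ms_spiked": 14.1, "ms_unspiked": 4.5, "ms_added": 10.0,
    "ccv_measured": 10.4, "ccv_true": 10.0,
}
example_limits = {"reporting_limit": 0.5, "lcs": (85, 115),
                  "ms": (70, 130), "ccv": (90, 110)}

qc_results = evaluate_batch(example_batch, example_limits)
for check, (value, passed) in qc_results.items():
    print(f"{check}: {value:.2f} -> {'PASS' if passed else 'FAIL'}")
```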

Managing this volume of analytical and QC data requires a robust Laboratory Information Management System (LIMS). A properly configured LIMS automates data transcription from the instrument software, flags QC failures in real time, and generates compliance-ready reports. Beyond basic flagging, modern informatics solutions ensure complete traceability: every action, from initial sample receipt to final data approval, is logged in an unalterable audit trail. This level of data integrity is essential during regulatory audits and helps lab managers quickly identify the root cause of QC failures, minimizing the expensive downtime associated with sample re-runs. Automated data workflows also reduce transcription errors and the administrative burden on your scientific staff.
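One way to picture a tamper-evident audit trail is a hash chain, in which each entry stores a hash of the entry before it, so editing any earlier record breaks every later link. The sketch below illustrates that general idea only. It does not describe how any particular LIMS product works, the record fields and function names are hypothetical, and timestamps and electronic signatures are left out to keep the example short.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """SHA-256 of a record's fields, serialized in a stable key order."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(trail, user, action, sample_id):
    """Append a record that is cryptographically linked to the previous one."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    body = {"user": user, "action": action,
            "sample_id": sample_id, "prev_hash": prev_hash}
    entry = dict(body, hash=_digest(body))
    trail.append(entry)
    return entry


def verify_trail(trail):
    """Return True only if every record is unmodified and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical sample lifecycle.
trail = []
append_entry(trail, "jdoe", "sample received", "S-001")
append_entry(trail, "asmith", "digestion complete", "S-001")
append_entry(trail, "asmith", "result approved", "S-001")
print("intact:", verify_trail(trail))

trail[1]["action"] = "digestion skipped"  # simulate after-the-fact tampering
print("after edit:", verify_trail(trail))
```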

Conclusion: Optimizing your environmental lab for heavy metal testing

Effective elemental analysis of soil and water requires a strategic approach to laboratory operations. Lab managers must ensure that their facilities utilize appropriate sample preparation protocols, select the correct analytical instrumentation for their specific detection limits, and maintain rigorous quality control and contamination standards. By aligning analytical capabilities with regulatory requirements and investing in automated data management, laboratories can improve sample throughput, reduce long-term operational costs, and confidently deliver accurate, legally defensible environmental data.

This article was created with the assistance of Generative AI and has undergone editorial review before publishing.