WACO, TX — May 28, 2021 — About 36 million people have blindness, including 1 million children. Additionally, 216 million people experience moderate to severe visual impairment. However, STEM (science, technology, engineering, and math) education relies heavily on three-dimensional imagery, most of which is inaccessible to students with blindness. A breakthrough study by Bryan Shaw, PhD, professor of chemistry and biochemistry at Baylor University, aims to make science more accessible to people who are blind or visually impaired through small, candy-like models.

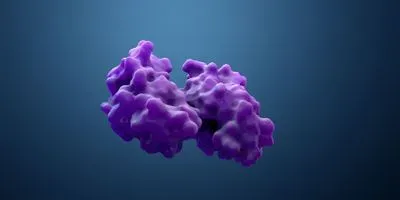

The Baylor-led study, published May 28 in the journal Science Advances, uses millimeter-scale gelatin models—similar to gummy bears—to improve visualization of protein molecules using oral stereognosis, or visualization of 3D shapes via the tongue and lips. The goal of the study was to create smaller, more practical tactile models of 3D imagery depicting protein molecules. The protein molecules were selected because their structures are some of the most numerous, complex, and high-resolution 3D images presented throughout STEM education.

"Your tongue is your finest tactile sensor—about twice as sensitive as the finger tips—but it is also a hydrostat, similar to an octopus arm. It can wiggle into grooves that your fingers won't touch, but nobody really uses the tongue or lips in tactile learning. We thought to make very small, high-resolution 3D models, and visualize them by mouth," Shaw said.

The study included 396 participants in total—31 fourth- and fifth-graders as well as 365 college students. Mouth, hands, and eyesight were tested at identifying specific structures. All students were blindfolded during the oral and manual tactile model testing.

Each participant was given three minutes to assess or visualize the structure of a study protein with their fingertips, followed by one minute with a test protein. After the four minutes, they were asked whether the test protein was the same or a different model than the initial study protein. The entire process was repeated using the mouth to discern shape instead of the fingers.

Students recognized structures by mouth at 85.59 percent accuracy, similar to recognition by eyesight using computer animation. Testing involved identical edible gelatin models and nonedible 3D-printed models. Gelatin models were correctly identified at rates comparable to the nonedible models.

"You can visualize the shapes of these tiny objects as accurately by mouth as by eyesight. That was actually surprising," Shaw said.

The models, which can be used for students with or without visual impairment, offer a low-cost, portable, and convenient way to make 3D imagery more accessible. The methods of the study are not limited to molecular models of protein structures—oral visualization could be done with any 3D model, Shaw said.

Additionally, while gelatin models were the only edible models tested, Shaw's team created high-resolution models from other edible materials, including taffy and chocolate. Certain surface features of the models, like a protein's pattern of positive and negative surface charge, could be represented using different flavor patterns on the model.

"This methodology could be applied to images and models of anything, like cells, organelles, 3D surfaces in math, or 3D pieces of art—any 3D rendering. It's not limited to STEM, but useful for humanities too," said Katelyn Baumer, doctoral candidate and lead author of the study.

Shaw's lab sees oral visualization through tiny models as a beneficial addition to the multisensory learning tools available for students, particularly those with extraordinary visual needs. Models like the ones in this study can make STEM more accessible to students with blindness or visual impairment.

"Students with blindness are systematically excluded from chemistry, and much of STEM. Just look around our labs and you can see why—there is Braille on the elevator button up to the lab and Braille on the door of the lab. That's where accessibility ends. Baylor is the perfect place to start making STEM more accessible. Baylor could become an oasis for people with disabilities to learn STEM," Shaw said.

Shaw isn't new to high-profile research related to visual impairment. He has received recognition for his work on the White Eye Detector app. Shaw and Greg Hamerly, PhD, associate professor of computer science at Baylor, built the mobile app, which serves as a tool for parents to screen for pediatric eye disease. Shaw's inspiration for the app came after his son, Noah, was diagnosed with retinoblastoma at four months of age.

- This press release was originally published on the Baylor University Media and Public Relations website. It has been edited for style.